
About

prpc is an RPC framework that provides network communication for high-performance computing, with components such as accumulator.

Build

Docker Build

docker build -t 4pdosc/prpc-base:latest -f docker/Dockerfile.base .

docker build -t 4pdosc/prpc:0.0.0 -f docker/Dockerfile .

Ubuntu

apt-get update && apt-get install -y g++-7 openssl curl wget git \
    autoconf cmake protobuf-compiler protobuf-c-compiler zookeeper zookeeperd googletest build-essential libtool libsysfs-dev pkg-config

apt-get install -y libsnappy-dev libprotobuf-dev libprotoc-dev libleveldb-dev \
    zlib1g-dev liblz4-dev libssl-dev libzookeeper-mt-dev libffi-dev libbz2-dev liblzma-dev

mkdir build && cd build && cmake .. && make -j && make install && cd ..

Design

Client

- Initialize `RpcService` and register on `Master`.

- The `Master` receives the registration request and returns the global `RpcService` information, including the number, address, and service registered on the node.

- `RpcService` creates a `FrontEnd` for each service node based on the returned information.

- `FrontEnd` connects to the target node only when a message needs to be sent, and manages the connection to ensure the reliability of the service.

- If the server is also on this node, the message can be moved to the server directly; otherwise it needs to go through the TCP or RDMA network.

- Send the message to the target service node.
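The client steps above can be sketched roughly as follows. This is a minimal illustration, not prpc's actual API: `NodeInfo`, `Route`, and `LazyFrontEnd` are hypothetical names, and the network write is replaced by an in-memory placeholder. It only shows the two decisions described in the list: connect lazily on the first send, and bypass the network when the server is on the same node.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Hypothetical description of one service node, as the Master might return it.
struct NodeInfo {
    int rank;
    std::string address;
};

enum class Route { Local, Network };

// Hypothetical stand-in for a FrontEnd: it connects lazily, on the first send.
class LazyFrontEnd {
public:
    LazyFrontEnd(NodeInfo target, int self_rank)
        : target_(std::move(target)), self_rank_(self_rank) {}

    Route send(const std::string& msg) {
        if (target_.rank == self_rank_) {
            // Server lives on this node: hand the message over without the network.
            local_inbox_.push_back(msg);
            return Route::Local;
        }
        if (!connected_) {
            // A real implementation would open a TCP or RDMA connection here.
            connected_ = true;
            ++connect_count_;
        }
        assert(connected_);
        wire_.push_back(msg);  // placeholder for the actual network write
        return Route::Network;
    }

    bool connected() const { return connected_; }
    int connect_count() const { return connect_count_; }

private:
    NodeInfo target_;
    int self_rank_;
    bool connected_ = false;
    int connect_count_ = 0;
    std::vector<std::string> local_inbox_;  // messages moved directly to a local server
    std::vector<std::string> wire_;         // messages that would go over TCP or RDMA
};
```

In this sketch a front end created for a remote node stays unconnected until its first `send`, and repeated sends reuse the same connection.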

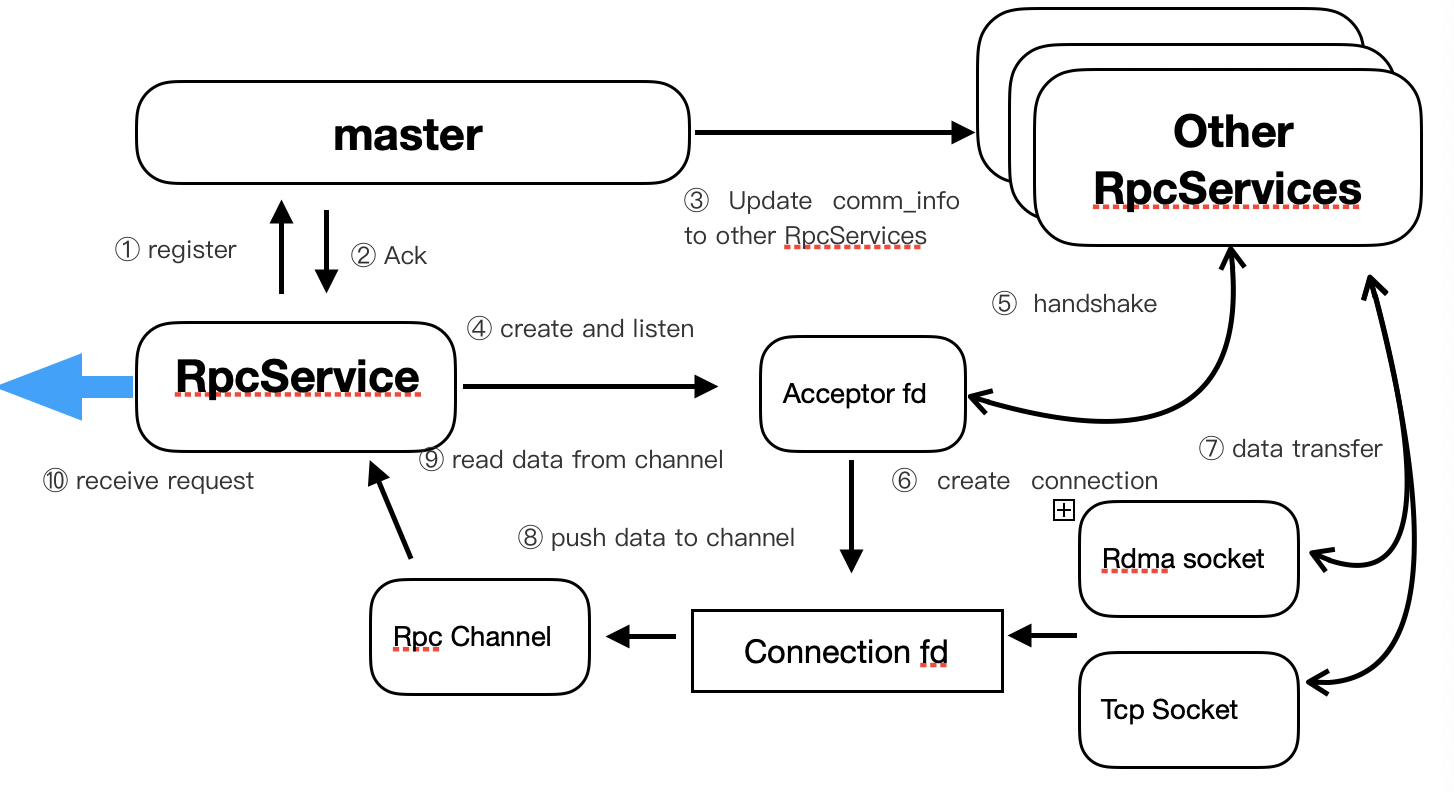

Server

- Initialize `RpcService` and register on `Master`.

- After receiving the registration request, the `Master` will return a confirmation message.

- Then `Master` will broadcast the node's rank, address and services to all nodes.

- `RpcService` creates the acceptor fd and keeps listening on it.

- Other nodes connect to this node.

- For each newly accepted connection, a new connection fd is established to send and receive data according to the node configuration and network topology.

- Other nodes send messages to this node through the TCP or RDMA protocol.

- After the connection fd receives the message, the background receiving thread will be notified and the message will be filled into `RpcChannel`.

- Get the message from `RpcChannel` through `recv_request()`.

- Complete message reception.
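The last steps of the server flow (a background thread fills the channel, the caller takes messages out) can be pictured as a blocking queue. The sketch below is an assumption-laden illustration: `ChannelSketch` and `push` are hypothetical, and although the method is named `recv_request()` after the call mentioned above, its signature here is invented and is not prpc's real one.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <string>
#include <utility>

// Hypothetical stand-in for RpcChannel: a queue filled by the receiving
// thread and drained by the caller.
class ChannelSketch {
public:
    // Called by the (hypothetical) background receiving thread.
    void push(std::string msg) {
        {
            std::lock_guard<std::mutex> lock(mu_);
            queue_.push_back(std::move(msg));
        }
        cv_.notify_one();
    }

    // Blocks until a message is available, then returns it.
    std::string recv_request() {
        std::unique_lock<std::mutex> lock(mu_);
        cv_.wait(lock, [this] { return !queue_.empty(); });
        assert(!queue_.empty());
        std::string msg = std::move(queue_.front());
        queue_.pop_front();
        return msg;
    }

private:
    std::mutex mu_;
    std::condition_variable cv_;
    std::deque<std::string> queue_;
};
```

Messages come out in the order they were pushed, and a caller that arrives before any message simply waits on the condition variable.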

FrontEnd

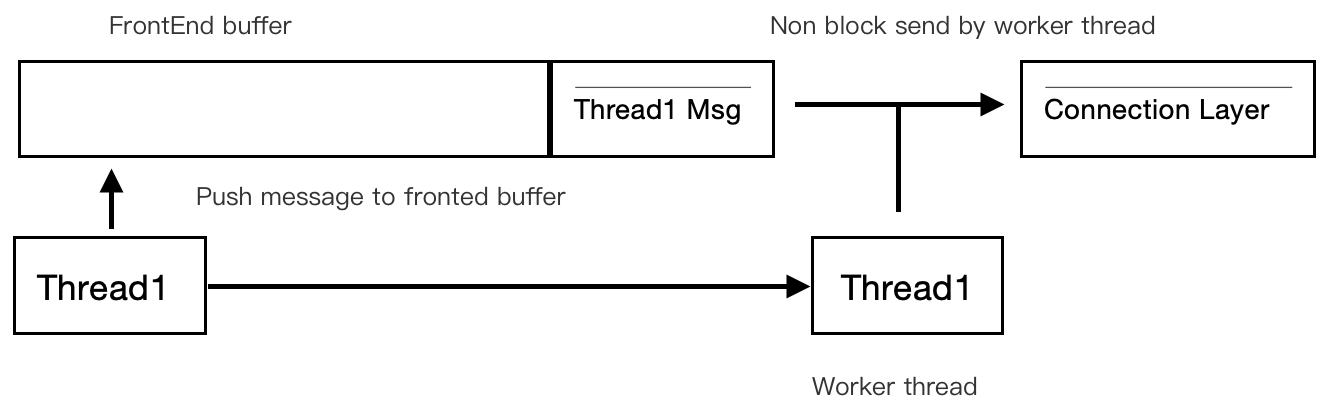

In order to optimize response time and communication efficiency, a non-blocking mode is adopted for message sending, and the specific implementation is as follows:

FrontEnd uses a thread-safe buffer (multiple producers, single consumer). When a thread (Thread1) sends a message, it first pushes the message into the buffer. If there are no other messages in the buffer, the thread sends all messages pushed to the buffer until the buffer is empty. This thread is called the worker thread.

When another thread (Thread2) sends a message, it pushes the message into the buffer. If it detects that the buffer already has a worker thread (Thread1), it returns immediately and the message is sent by the worker thread.
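One way to picture this worker election is with a counter of pending messages: the thread that raises the counter from zero becomes the worker and drains the buffer, while every other thread just enqueues and returns. The sketch below is illustrative only. `SendBuffer` and its `sink` callback are hypothetical names, and a mutex-protected `std::deque` stands in for whatever buffer prpc actually uses.

```cpp
#include <atomic>
#include <cassert>
#include <deque>
#include <functional>
#include <mutex>
#include <string>
#include <utility>

// Hypothetical multi-producer buffer with a single elected worker.
class SendBuffer {
public:
    explicit SendBuffer(std::function<void(const std::string&)> sink)
        : sink_(std::move(sink)) {}

    // Returns true if the calling thread acted as the worker thread.
    bool send(std::string msg) {
        {
            std::lock_guard<std::mutex> lock(mu_);
            queue_.push_back(std::move(msg));
        }
        // fetch_add returns the previous count; non-zero means a worker is active.
        if (pending_.fetch_add(1, std::memory_order_acq_rel) != 0) {
            return false;  // the active worker will send this message
        }
        // This thread is the worker: drain until no message is pending.
        do {
            std::string next;
            {
                std::lock_guard<std::mutex> lock(mu_);
                assert(!queue_.empty());  // every pending count has a queued message
                next = std::move(queue_.front());
                queue_.pop_front();
            }
            sink_(next);  // placeholder for the real network write
        } while (pending_.fetch_sub(1, std::memory_order_acq_rel) != 1);
        return true;
    }

private:
    std::function<void(const std::string&)> sink_;
    std::mutex mu_;
    std::deque<std::string> queue_;
    std::atomic<int> pending_{0};
};
```

Because only the thread that moved the counter from 0 to 1 ever calls `sink_`, writes to the connection are serialized without holding a lock during the send itself.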

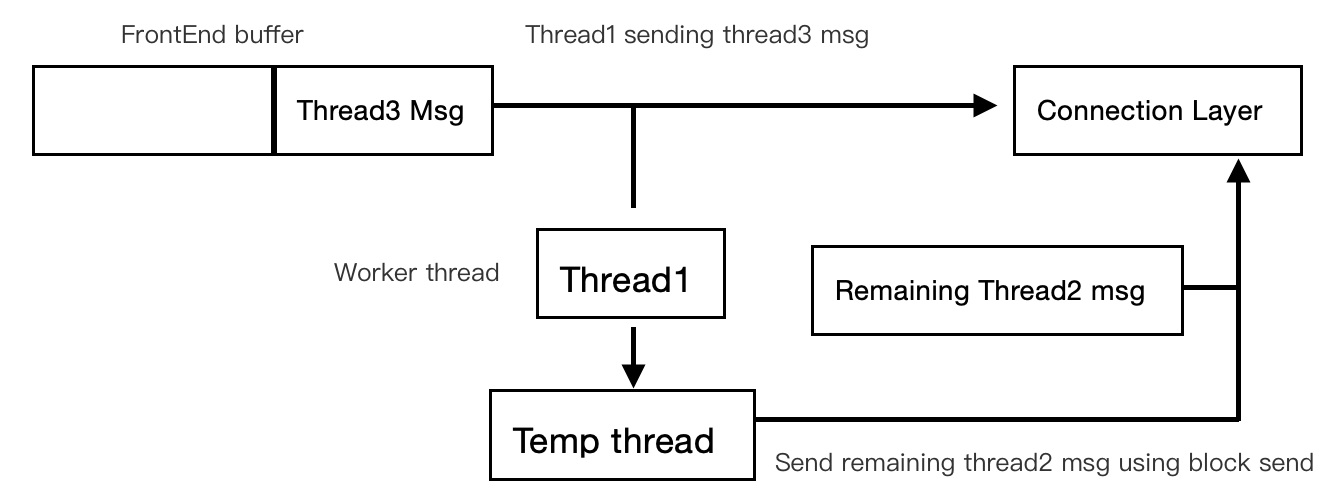

Since a non-blocking system call is used when sending data, a message may be too large to be sent completely in one system call. In that case, to keep response time low, the worker thread creates a temporary thread to send the remaining content and continues to process the next message in the buffer.
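The split between "what one non-blocking call managed to send" and "what is left over" can be sketched as below. This is a simplified illustration under stated assumptions: `TryWrite`, `SendResult`, `send_once`, and `send_remaining` are hypothetical names, the socket is abstracted as a callback that accepts some prefix of the data, and the hand-off to a temporary thread is left out.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>

// Hypothetical non-blocking write: accepts some prefix of the data and
// returns how many bytes it took (possibly 0).
using TryWrite = std::function<std::size_t(const char* data, std::size_t len)>;

struct SendResult {
    std::size_t sent;       // bytes accepted by the single attempt
    std::string remaining;  // bytes that still have to be sent
};

// One attempt only, so the caller is never blocked by a large message.
SendResult send_once(const TryWrite& try_write, const std::string& msg) {
    std::size_t n = try_write(msg.data(), msg.size());
    assert(n <= msg.size());
    return {n, msg.substr(n)};
}

// Finishes a partially sent message. In the description above this work is
// given to a temporary thread. Real code would wait for the socket to become
// writable instead of retrying in a tight loop.
void send_remaining(const TryWrite& try_write, std::string remaining) {
    while (!remaining.empty()) {
        std::size_t n = try_write(remaining.data(), remaining.size());
        assert(n <= remaining.size());
        remaining.erase(0, n);
    }
}
```

With a writer that accepts four bytes per call, `send_once` on an eleven-byte message reports four bytes sent and leaves seven for `send_remaining`.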

In this way, thread safety is ensured when messages are sent by multiple threads, context switching and memory copying are minimized, and CPU cache misses are avoided as much as possible.

Exception

The exception handling process of the client's `send_request` is shown in the following figure:

When a FrontEnd fails to send due to network or other reasons, it sets its current status to EPIPE and tries to find another FrontEnd registered with the same service. If one is found, the message is forwarded to it; otherwise an error response is returned immediately.
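The failover rule can be sketched as a small search over the known front ends. The names here (`FrontEndStub`, `State`, `send_with_failover`) are hypothetical and the network is simulated by a `link_up` flag, so this is an illustration of the rule rather than prpc's implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

enum class State { Ok, Epipe };

// Hypothetical stand-in for a FrontEnd bound to one service.
struct FrontEndStub {
    FrontEndStub(std::string svc, bool up)
        : service(std::move(svc)), link_up(up), state(State::Ok) {}

    bool try_send(const std::string& msg) {
        if (!link_up) {
            state = State::Epipe;  // remember that this front end is broken
            return false;
        }
        delivered.push_back(msg);
        return true;
    }

    std::string service;
    bool link_up;  // simulated network condition
    State state;
    std::vector<std::string> delivered;
};

// Returns the index of the front end that took the message,
// or -1 when an error response has to be returned.
int send_with_failover(std::vector<FrontEndStub>& frontends,
                       std::size_t primary, const std::string& msg) {
    assert(primary < frontends.size());
    if (frontends[primary].try_send(msg)) {
        return static_cast<int>(primary);
    }
    const std::string service = frontends[primary].service;
    for (std::size_t i = 0; i < frontends.size(); ++i) {
        FrontEndStub& fe = frontends[i];
        // Skip the failed one, other services, and front ends already in EPIPE.
        if (i == primary || fe.service != service || fe.state == State::Epipe) {
            continue;
        }
        if (fe.try_send(msg)) {
            return static_cast<int>(i);
        }
    }
    return -1;
}
```

A front end that fails is marked and skipped on later searches, so repeated failures converge quickly on the error path instead of retrying broken connections.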

More Documents
