PanGu-α Introduction

中文|English

PanGu-α is proposed by a joint technical team headed by PCNL. It is the first large-scale Chinese pre-trained language model with 200 billion parameters trained on 2048 Ascend processors using an automatic hybrid parallel training strategy. The whole training process is done on the "Peng Cheng Cloud Brain II" computing platform with the domestic deep learning framework called MindSpore. The PengCheng·PanGu-α pre-training model can support rich applications, has strong few-shot learning capabilities, and has outstanding performance in text generation tasks such as knowledge question and answer, knowledge retrieval, knowledge reasoning, and reading comprehension.

[Technical report]

[Model download]

[Model compression]

[Model application]

[GPU inference and finetune]

[Corpus collection and processing]

[MindSpore official website]

[Join WeChat communication group]

[License]

Key points

- The first Chinese autoregressive language model "PengCheng·PanGu-α" with 200 billion parameters

- Code and model are gradually released

- The first sequential autoregressive pre-training language model ALM

- The ultra-large-scale automatic parallel technology in MindSpore

- The model is trained based on the domestic full-stack software and hardware ecosystem(MindSpore+CANN+Atlas910+ModelArts)

Dataset

Massive corpus is the basis of pre-training language model research. The PanGu-α team collected nearly 80TB raw data from the open datasets such as common crawl web page data, e-books, etc.

We build a distributed cluster for large corpus preprocessing and constructed a 1.1TB high-quality Chinese text corpus through data cleaning, filtering, de-duplication, and quality evaluation. The corpus contains about 250 billion tokens. The label information related to downstream tasks is completely removed to ensure the unbiased nature of the source data.

Model structure

We design the query layer on top of the stacked Transformer layers, which aims to explicitly induce the expected output.

In the pretraining stage of the autoregressive model, it comes to the prediction of the next token.

MindSpore automatic parallelism

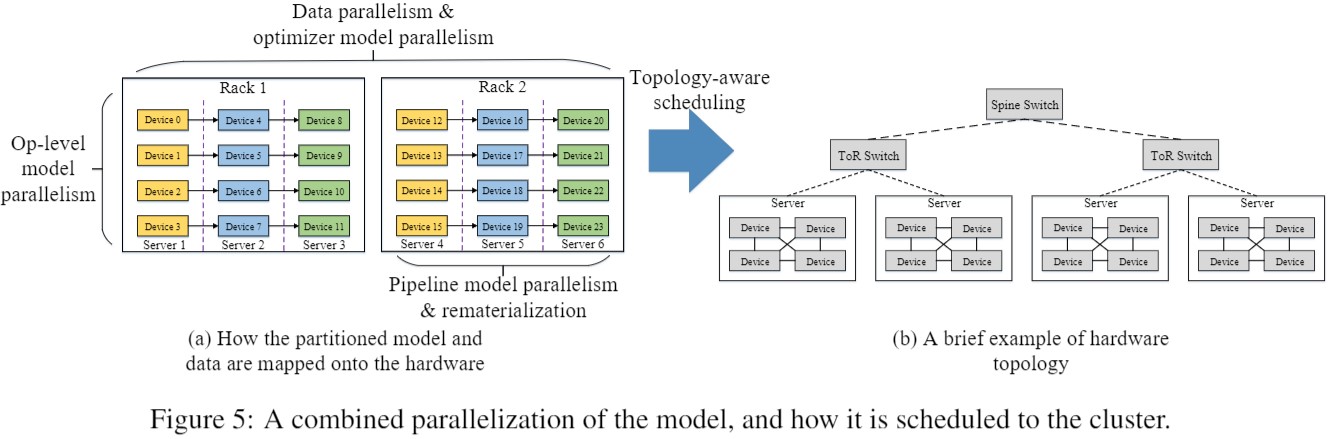

To efficiently train a model with hundreds of billions to trillions of parameters in large-scale clusters, users need to comprehensively consider the number of parameters, calculations, calculation types, cluster bandwidth topology, and the number of samples to design a partitioning strategy with better performance. The model code writing should consider not only the algorithms but also a large number of partition and communication strategies.

MindSpore is the industry's first framework to support fully automatic parallelism. MindSpore multi-dimensional automatic parallelism technique minimizes the overall computing time through data parallelism, operator-level model parallelism, pipeline model parallelism, optimizer model parallelism, heterogeneous parallelism, recalculation, efficient memory reuse, and Topology-aware scheduling. Each parallelism dimension trades computation (or communication) overheads for memory (or throughput) benefits. To acquire maximum end-to-end throughput, a balanced composition point should be found along these dimensions. The problem becomes more challenging when considering the heterogeneous bandwidths in a cluster of devices.

| Hardware platform |

Number of devices |

Operating system |

Cluster management |

Framework |

| Ascend 910 |

2048 |

EulerOS-aarch64 |

ModelArts️ |

MindSpore |

Model download

When training on a large-scale cluster, the models are saved in fragments. The download links of the 2.6B and 13B models are as follows:

| Model |

size |

source |

md5 |

| PengCheng·PanGu-α-2.6B |

2.6G |

2.6B_part0 |

3904bb0e551f36206cfca96ab0f63cba |

| PengCheng·PanGu-α-2.6B |

2.6G |

2.6B_part1 |

19407cbc33ee4929d68f500e015869cc |

| PengCheng·PanGu-α-2.6B |

2.6G |

2.6B_part2 |

9ce140312f962079e13f91f681a269ad |

| PengCheng·PanGu-α-2.6B |

2.6G |

2.6B_part3 |

290158ffc426a89b7e357018c701e71d |

| PengCheng·PanGu-α-2.6B |

391M |

2.6B_word_embedding |

9ca91161bde9c089dd8304db0428455e |

| PengCheng·PanGu-α-2.6B |

10M |

2.6B_top_query_embedding |

320b401050319c1c63117c36b93651c1 |

| PengCheng·PanGu-α-2.6B |

10M |

2.6B_position_embedding |

bf7eb84b7b9fd6004cb22260883ab080 |

| PengCheng·PanGu-α-13B |

12G |

13B_part0 |

724bdaf87e0eb4aa9ca0f35e8af92464 |

| PengCheng·PanGu-α-13B |

12G |

13B_part1 |

493579a1ccc2a16a616cf04daedd031f |

| PengCheng·PanGu-α-13B |

12G |

13B_part2 |

de4e8a023c56b7134bff4dfbcc230871 |

| PengCheng·PanGu-α-13B |

12G |

13B_part3 |

6a6bcfdadf5a0d23c567d6959bf4eaba |

| PengCheng·PanGu-α-13B |

781M |

13B_word_embedding |

a4e0c5893d255500396dfa1bfe7ba3ba |

| PengCheng·PanGu-α-13B |

20M |

13B_top_query_embedding |

4d75c3b42f85db825cebdc99329160bd |

| PengCheng·PanGu-α-13B |

20M |

13B_position_embedding |

299ee4174cc4de7fdebec1c3e631e348 |

| PengCheng·PanGu-α-200B |

~T |

~ |

|

Environmental requirements

| Hardware platform |

Operating system |

Framework |

2.6/13B inference equipment quantity |

200B inference equipment quantity |

| Ascend 910 |

EulerOS-aarch64 |

MindSpore |

8 |

64 |

Environmental requirements

mindspore

jieba 0.42.1

sentencepiece 0.1.94

Training

Run the following command to start training, MODE can be set as 2.6B, 13Bor 200B.

export MODE=2.6B

bash scripts/run_distribute_train.sh 8 /home/data/ /home/config/rank_table_8p.json $MODE

NPU inference

Firstly, download the following three files

- Tokenizier: Download vocal.vocab and vocab.model in the “$FILE_PATH/tokenizer/” directory;

- Model: Download the model file in the “$FILE_PATH/checkpiont_file/” directory;

- Strategy file: This file describes the partitioning strategy of the model. The file is located in the ”$FILE_PATH/strategy_load_ckpt/“ directory.

Inference

$FILE_PATH=/home/your_path

bash scripts/run_distribute_predict.sh 8 /home/config/rank_table_8p.json ${FILE_PATH}/strategy_load_ckpt \

${FILE_PATH}/tokenizer/ ${FILE_PATH}/checkpiont_file filitered

Model compression

Compress PengCheng·PanGu-α-13B from 8 cards to 1 card for efficient inference. [see]

Model application

The application technology of Pengcheng·Pangu-α provides baseline from basic technology to application, and promotes the application innovation of model. [see]

GPU inference and finetune

PengCheng·PanGu-α inference and finetune on GPU. [see]

Task description

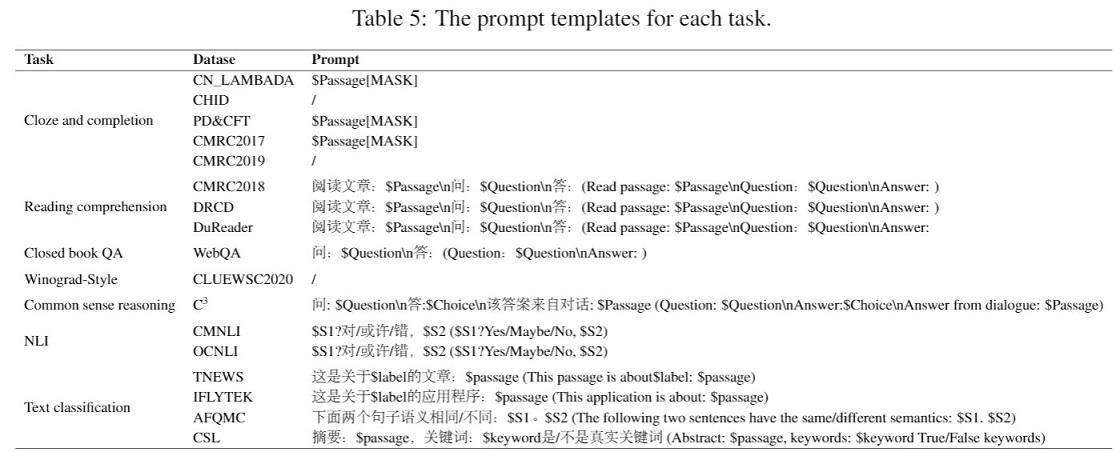

In order to evaluate the performance of the model, the team collected 16 different types of Chinese downstream tasks as shown in the following table:

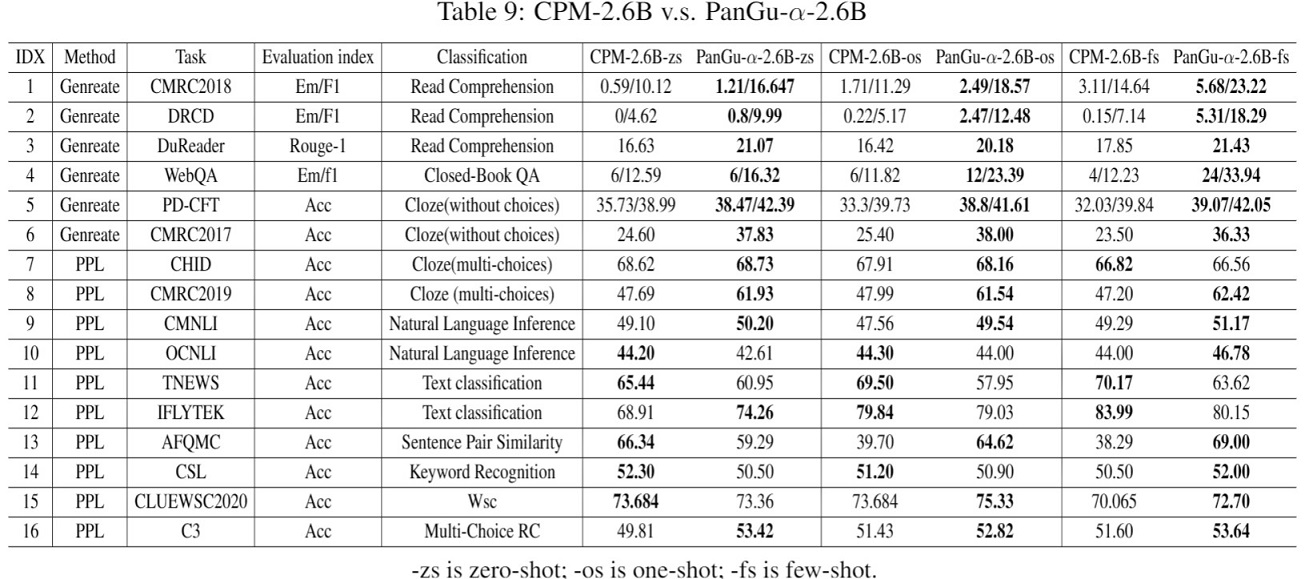

Due to the lack of Chinese benchmarks for few-shot learning, we compare PanGu-α with the Chinese pre-training language model CPM-2.6B released by Zhiyuan Research Institute. Specifically, the PanGu-α-2.6B model was trained using 100GB data sampled from the 1.1TB data and compared with CPM-2.6B on 16 downstream tasks. The results are shown in the following table:

The experimental results show that PanGu-α-2.6B achieves higher performance compared to CPM-2.6B on more than 11 tasks in zero-shot setting, 12 tasks on the one-shot setting, and 14 tasks on the few-shot setting. In general, the experimental results indicate that PanGu-α-2.6B achieves higher in-context learning ability over CPM-2.6B, especially for few-shot learning and generation-tasks. Regarding generation-tasks, PanGu-α-2.6B outperforms CPM-2.6B with an improvement of 6 points on average. To be more specific, PanGu-α-2.6B surpasses CPM-2.6B with 5 points in scores for both reading comprehension and closed-book QA tasks, 7 points in scores for cloze (without choices) tasks respectively. Regarding perplexity-tasks, PanGu-αis comparable to CPM-2.6B on natural language inference with CMNLI and OCNLI datasets, while it is slightly worse than CPM on classification tasks with TNEWS and IFLYTEK datasets. We suppose that the main factor that contributes to the different performance of CPM-2.6B and PanGu-α-2.6B is the training data. We collect massive and diverse data from a wide range of sources, which allows our PanGu-α model to handle more diverse tasks.

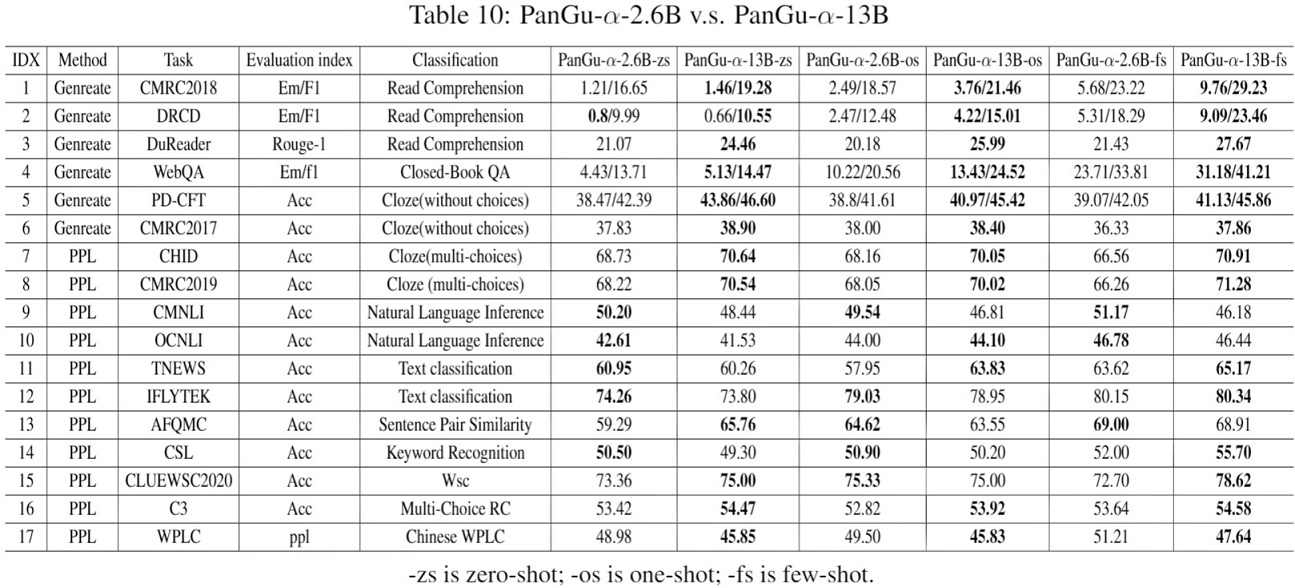

The team also compares PanGu-α-13B with PanGu-α-2.6B. PanGu-α-13B outperforms PanGu-α-2.6B on all generation-tasks and most of the perplexity-tasks. Regarding CMRC2018, DRCD and WebQA tasks of PanGu-α-13B, the few-shot performance surpasses zero-shot by more than 10 points, demonstrating that PanGu-α-13B has superior in-context learning ability.PanGu-α-13B outperforms PanGu-α-2.6B with an improvement of 3 points on average. To be more specific, PanGu-α-13B surpasses PanGu-α-2.6B with 4 points for both reading comprehension and closed-book QA tasks, 2 points for cloze (without choices) task respectively.The model file size of Pengcheng Pangu α-200B is in the terabyte range, and the downstream task inference is time-consuming and labor-intensive, and there is more room for optimization and acceleration. The team is working together to complete the inference and evaluation, and synchronize the relevant research results as soon as possible.

Examples of generations:

Input: 上联:欢天喜地度佳节 下联:

Generate: 花好月圆庆团圆

Input: 小红是小明的姐姐,小红的年纪比小明

Generate: 大

Input: 中国和美国和日本和法国和加拿大和澳大利亚的首都分别是哪里?

Generate: 中国的首都是北京,美国的首都是华盛顿,日本的首都是东京,法国的首都是巴黎,澳大利亚的首都是堪培拉。

Input: 四川的省会是哪里?

Generate: 成都,简称蓉,别称蓉城、锦城、天府之国,是四川省省会、副省级市、特大城市、中国西部地区重要的中心城市、国家重要的现代制造业基地、西南地区综合交通枢纽。

Input: 李大钊如果在世,他会对今天的青年人说:

Generate: “如果我们多读点书,多观察一点国家社会的实况,遇事能多些思考,多些辨别,就不会被清和军阀的残暴统治所吓倒,不被他们那一套盲目排外的舆论所迷惑。”“我们要组织大批的有志青年,拿起笔来,到学校里去,把我们的眼睛,耳朵,都用到学校上去,用到社会上去,使我们的思想和行动,都能反映出全国人民的意志,作为我们行动的指南。”

#作者:风吹走的名字链接:https://www.zhihu.com/question/313316339/answer/623572659来源:知乎著作权归作者所有。

Input: 书生:羌笛何须怨杨柳,春风不度玉门关。

飞云:(这诗怎么这么耳熟?且过去跟他聊聊如何。)

书生:小兄弟,要不要一起喝一杯?

飞云:你请我呀?你若是请我,我便和你喝一杯;你若不请我,我便一个人去喝。

书生:小兄弟,看你年纪轻轻,不至于这么势利吧?

飞云:势利如何说起?你请我喝了酒,我自然要记你的人情;我欠了你的人情,日后你有求于我,我自然要报答你,这样一来大家都不吃 亏,不过你的好处要在后头得到而已。

书生:小兄弟言之有理,不过在下有一点不太明白,那就是为什么你不愿意先请在下喝了酒待日后在下回报于你呢?

Generate1:你若是愿意先请我喝了酒,待日后我回报于你,你便有了收获,你岂不是更先欠下我的人情了吗?

飞云:这是自然的,我也说过了,我的确是不喜欢欠别人的人情,更不喜欢别人欠我的人情,那便是把好处在后头得到了。

书生:小兄弟,我是一介书生,你在座的各位都是我的衣食父母,你们如果都像我一样想要什么,要不到了我,那你们会像我一样被饿死、被寒冷冻死吗?

飞云:那是因为在座的各位都是饥寒交迫之人,都是饿得骨瘦如柴之人,而我却是经常吃饱喝足、锦衣玉食、养尊处优之人,这正是所谓的乐施善心,积德行善之人。

书生:小兄弟,你是不是因为这样才不愿意欠下我的人情?

飞云:不是,因为我知道,你如果不愿意先请我喝了酒待日后我回报于你,那么我便不能报答你。而我不愿意欠别人的人情,那我便会将自己的美好的善行全部都奉献给你,以报答你对我的关怀和帮助。

书生:小兄弟,不知你用什么方法找到这么多可敬可爱的人们的?

飞云:呵呵,说来惭愧,我找他们可不是什么难事,我认识他们的时候,他们也还没有这么大的势力,我只是为了帮他们找到一条可以生存的路,从他们那里换到了一些粮食。只是那时候我对他们的帮助确实太少了,所以最后他们都没有答应我的要求,也没有向我索要东西,甚至于没有等到我的帮助便饿死了。

书生:小兄弟,我看你年纪轻轻,怎么会有如此崇高的思想境界呢?又是从哪里学来的呢?你说来听听。

飞云:这倒说不上,只不过是我发现了很多常人做不到的事情,所以就学会了很多常人不会去做的事情,所谓饮水思源,这便是其中的一个原因。

书生:你说的是不是为了一些自己不知道的事物,而发奋图强?

飞云:是啊,我一直都

Generate2:飞云:咳,年轻人说的话要有选择性,我既然说了我先请你,你却又说一个人喝一杯,可见我是有目的的,要是小兄弟真是不肯给在下好处,也可以不请我,到时候我便跟你说我请 了你,你就欠了我一个人情.若是小兄弟答应我,日后我自然会等你。

书生:啊,我明白了,这样就不会让你和我喝酒了。

飞云:对对,小兄弟是有诚信的人,不如这样,你叫在下这样做,我也是这样做,我们就到此为止,日后互不相欠。

书生:这...难道小兄弟不明白我的意思?

飞云:我当然明白。

书生:那我就明白了。

飞云:怎么?难道你还不明白我的意思吗?

书生:小兄弟,你相信我了?

飞云:嗯。

书生:那你应该明白我的意思,我不是不给你报酬的人,只是我不希望你因为我而耽误了自己的前程,你明白我的意思吗?

飞云:我当然明白你的意思。

书生:嗯,这一点我又何需多言?其实刚才我不是也说过了吗?我是一个有诚意的人,我很感谢你能让我明白了这么多,不过小兄弟你既然已经明白了我的意思,就表示你愿意接受我的这份礼物,我说到做到,你看怎么样?

飞云:哦,那太好了,以后我若有什么困难,你就可以来找我了。

书生:嗯,小兄弟,说得好。既然你已经接受了我的这份礼物,我也没有什么好说的了,不过你也要答应我,以后在没有我的允许下,你一定要记住,除了我,千万不要在别人面前提起你的家人。

飞云:嗯。

书生:我走了。

飞云:小兄弟慢走,来日再见。

WeChat group

Add to PengCheng·PanGu-α WeChat communication group:

Project information

PCNL, Huawei MindSpore, Huawei Noah's Ark Laboratory and Peking University are the major members of the PengCheng·PanGu-α joint development team.

License

Apache License 2.0