
tensorflow-yolov4-tflite

YOLOv4 and YOLOv4-tiny implemented in TensorFlow 2.0.

Convert YOLOv4, YOLOv3, and YOLO-tiny .weights files to .pb, .tflite, and TensorRT formats for TensorFlow, TensorFlow Lite, and TensorRT.

Download yolov4.weights file: https://drive.google.com/open?id=1cewMfusmPjYWbrnuJRuKhPMwRe_b9PaT

Prerequisites

- Tensorflow 2.3.0rc0

Performance

Demo

# Convert darknet weights to tensorflow

## yolov4

python save_model.py --weights ./data/yolov4.weights --output ./checkpoints/yolov4-416 --input_size 416 --model yolov4

## yolov4-tiny

python save_model.py --weights ./data/yolov4-tiny.weights --output ./checkpoints/yolov4-tiny-416 --input_size 416 --model yolov4 --tiny

# Run demo tensorflow

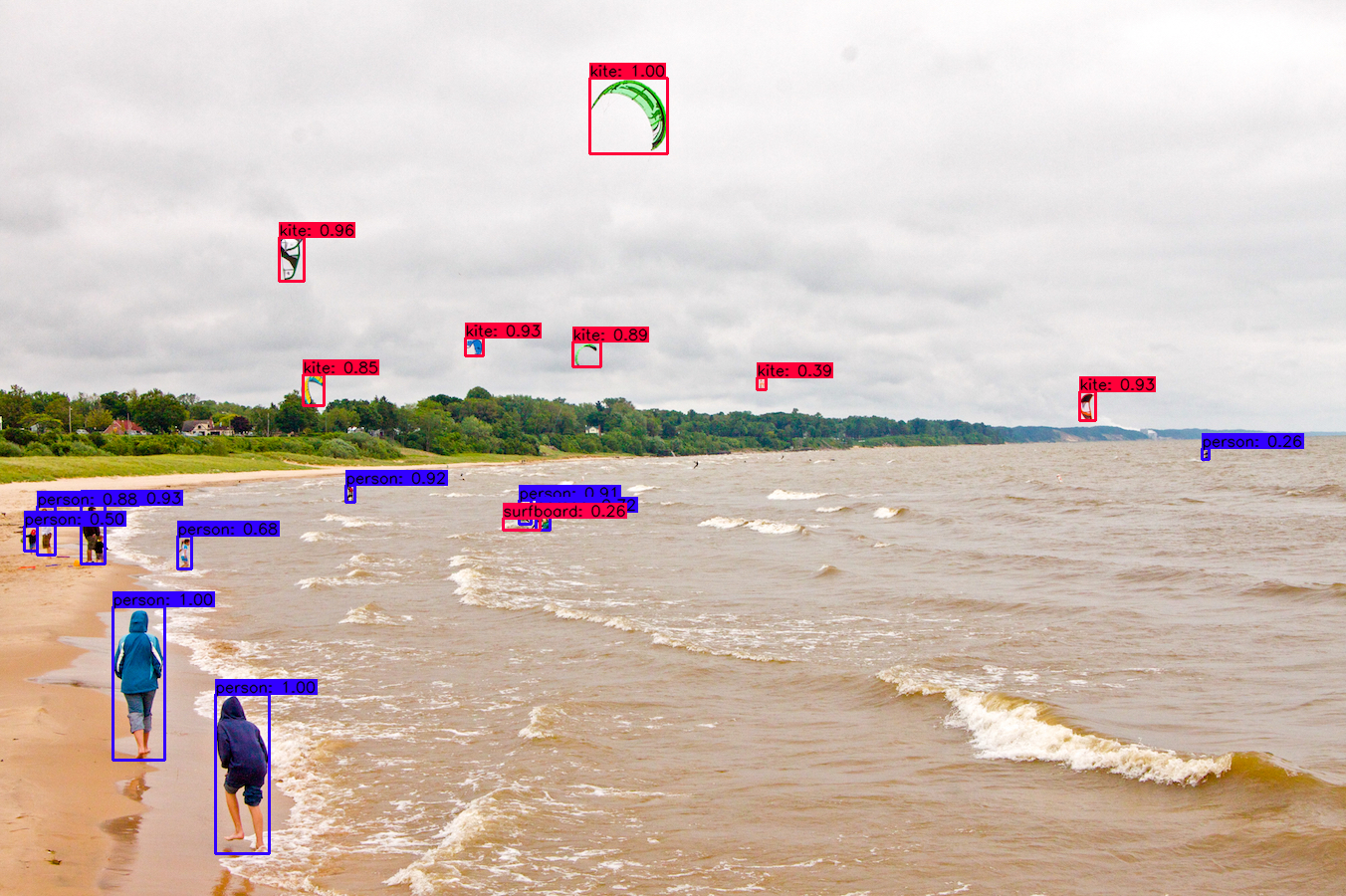

python detect.py --weights ./checkpoints/yolov4-416 --size 416 --model yolov4 --image ./data/kite.jpg

python detect.py --weights ./checkpoints/yolov4-tiny-416 --size 416 --model yolov4 --image ./data/kite.jpg --tiny

To run yolov3 or yolov3-tiny, change --model yolov4 to --model yolov3 in the command.
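The --model and --tiny flags combine into four variants, so a small helper can make the combinations explicit. The following is a minimal sketch, not part of the repository: `detect_command` is a hypothetical helper, and the checkpoint naming for yolov3 (`./checkpoints/yolov3-416`) is an assumption that mirrors the yolov4 paths used above. It only uses flags that appear in this README.

```python
import shlex


def detect_command(model="yolov4", tiny=False, size=416,
                   image="./data/kite.jpg", framework=None):
    """Build the detect.py argument list for one model variant (hypothetical helper)."""
    if model not in ("yolov3", "yolov4"):
        raise ValueError("model must be 'yolov3' or 'yolov4'")

    # Assumed naming scheme: <model>[-tiny]-<size>, as in the yolov4 examples.
    name = f"{model}-tiny-{size}" if tiny else f"{model}-{size}"
    weights = f"./checkpoints/{name}"
    if framework == "tflite":
        weights += ".tflite"

    cmd = ["python", "detect.py",
           "--weights", weights,
           "--size", str(size),
           "--model", model,
           "--image", image]
    if tiny:
        cmd.append("--tiny")
    if framework is not None:
        cmd += ["--framework", framework]
    return cmd


# Print the command line for yolov3-tiny; pass the list to subprocess.run to execute it.
print(shlex.join(detect_command(model="yolov3", tiny=True)))
```

Building the command as a list rather than a string avoids shell-quoting problems if an image path contains spaces.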

Output

Yolov4 original weight

Yolov4 tflite int8

Convert to tflite

# Save tf model for tflite converting

python save_model.py --weights ./data/yolov4.weights --output ./checkpoints/yolov4-416 --input_size 416 --model yolov4 --framework tflite

# yolov4

python convert_tflite.py --weights ./checkpoints/yolov4-416 --output ./checkpoints/yolov4-416.tflite

# yolov4 quantize float16

python convert_tflite.py --weights ./checkpoints/yolov4-416 --output ./checkpoints/yolov4-416-fp16.tflite --quantize_mode float16

# yolov4 quantize int8

python convert_tflite.py --weights ./checkpoints/yolov4-416 --output ./checkpoints/yolov4-416-int8.tflite --quantize_mode int8 --dataset ./coco_dataset/coco/val207.txt

# Run demo tflite model

python detect.py --weights ./checkpoints/yolov4-416.tflite --size 416 --model yolov4 --image ./data/kite.jpg --framework tflite

Int8 quantization of Yolov4 and Yolov4-tiny currently has some issues, which I will try to fix. In the meantime, you can try int8 quantization with Yolov3 and Yolov3-tiny.

Convert to TensorRT

# yolov3

python save_model.py --weights ./data/yolov3.weights --output ./checkpoints/yolov3.tf --input_size 416 --model yolov3

python convert_trt.py --weights ./checkpoints/yolov3.tf --quantize_mode float16 --output ./checkpoints/yolov3-trt-fp16-416

# yolov3-tiny

python save_model.py --weights ./data/yolov3-tiny.weights --output ./checkpoints/yolov3-tiny.tf --input_size 416 --model yolov3 --tiny

python convert_trt.py --weights ./checkpoints/yolov3-tiny.tf --quantize_mode float16 --output ./checkpoints/yolov3-tiny-trt-fp16-416

# yolov4

python save_model.py --weights ./data/yolov4.weights --output ./checkpoints/yolov4.tf --input_size 416 --model yolov4

python convert_trt.py --weights ./checkpoints/yolov4.tf --quantize_mode float16 --output ./checkpoints/yolov4-trt-fp16-416

Evaluate on COCO 2017 Dataset

# run ./scripts/get_coco_dataset_2017.sh to download the COCO 2017 dataset

# preprocess coco dataset

cd data

mkdir dataset

cd ..

cd scripts

python coco_convert.py --input ./coco/annotations/instances_val2017.json --output val2017.pkl

python coco_annotation.py --coco_path ./coco

cd ..

# evaluate yolov4 model

python evaluate.py --weights ./data/yolov4.weights

cd mAP/extra

python remove_space.py

cd ..

python main.py --output results_yolov4_tf
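Because the steps above change directory several times, it can help to see each command paired with the directory it runs in. The following is a minimal sketch, not part of the repository: `EVAL_STEPS` and `run_evaluation` are hypothetical names, the dataset download step is left out, and the working directories are inferred from the `cd` lines above.

```python
import subprocess
from pathlib import Path

# (working directory relative to the repository root, command) pairs,
# in the same order as the shell steps above.
EVAL_STEPS = [
    ("scripts", ["python", "coco_convert.py",
                 "--input", "./coco/annotations/instances_val2017.json",
                 "--output", "val2017.pkl"]),
    ("scripts", ["python", "coco_annotation.py", "--coco_path", "./coco"]),
    (".", ["python", "evaluate.py", "--weights", "./data/yolov4.weights"]),
    ("mAP/extra", ["python", "remove_space.py"]),
    ("mAP", ["python", "main.py", "--output", "results_yolov4_tf"]),
]


def run_evaluation(repo_root=".", dry_run=True):
    """Return the planned (cwd, command) steps; execute them unless dry_run is True."""
    root = Path(repo_root)
    if not dry_run:
        # Equivalent of "cd data; mkdir dataset".
        (root / "data" / "dataset").mkdir(parents=True, exist_ok=True)

    planned = []
    for workdir, cmd in EVAL_STEPS:
        cwd = root / workdir
        planned.append((str(cwd), cmd))
        if not dry_run:
            # check=True stops the pipeline at the first failing step.
            subprocess.run(cmd, cwd=cwd, check=True)
    return planned


for cwd, cmd in run_evaluation(dry_run=True):
    print(f"[{cwd}] {' '.join(cmd)}")
```

The default is a dry run that only prints the plan; call `run_evaluation(dry_run=False)` from the repository root to execute the steps.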

mAP50 on COCO 2017 Dataset

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 | 55.43 | 52.32 | |

| YoloV4 | 61.96 | 57.33 | |
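mAP50 is mean average precision where a detection counts as correct only if its intersection over union (IoU) with a ground-truth box of the same class is at least 0.5. The following is a minimal sketch of that matching criterion, not the code in the mAP directory: `iou` and `is_true_positive` are hypothetical helpers, and boxes are assumed to be (x_min, y_min, x_max, y_max) tuples.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    # Corners of the overlapping rectangle, if any.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Width and height are clamped to zero when the boxes do not overlap.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred_box, gt_box, threshold=0.5):
    """A prediction matches a ground-truth box when IoU >= threshold (0.5 for mAP50)."""
    return iou(pred_box, gt_box) >= threshold


# A prediction covering the lower half of the ground-truth box: IoU is exactly 0.5.
print(iou((0, 0, 10, 5), (0, 0, 10, 10)))
# A diagonally shifted prediction overlaps too little to count at the 0.5 threshold.
print(is_true_positive((5, 5, 15, 15), (0, 0, 10, 10)))
```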

Benchmark

python benchmarks.py --size 416 --model yolov4 --weights ./data/yolov4.weights

TensorRT performance

| YoloV4 416 images/s | FP32 | FP16 | INT8 |

|---|---|---|---|

| Batch size 1 | 55 | 116 | |

| Batch size 8 | 70 | 152 | |
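The table above reports throughput in images per second, which depends on batch size as well as on per-batch latency. The following is a minimal sketch of how such a figure can be measured, not a description of what benchmarks.py does: `measure_images_per_second` is a hypothetical helper, and `fake_inference` stands in for a real model call.

```python
import time


def measure_images_per_second(run_batch, batch_size, iterations=50, warmup=5):
    """Time repeated calls to run_batch and return throughput in images/s."""
    # Warm-up runs are excluded so one-off setup costs do not skew the result.
    for _ in range(warmup):
        run_batch()

    start = time.perf_counter()
    for _ in range(iterations):
        run_batch()
    elapsed = time.perf_counter() - start

    # Each call processes batch_size images.
    return batch_size * iterations / elapsed


def fake_inference():
    """Placeholder for a model call; sleeps briefly instead of running a network."""
    time.sleep(0.001)


rate = measure_images_per_second(fake_inference, batch_size=8, iterations=20)
print(f"{rate:.1f} images/s")
```

When timing a GPU model, make sure `run_batch` waits for the result (for example by converting the output to a NumPy array) so that asynchronous execution does not make the numbers look better than they are.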

Tesla P100

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 FPS | 40.6 | 49.4 | 61.3 |

| YoloV4 FPS | 33.4 | 41.7 | 50.0 |

Tesla K80

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 FPS | 10.8 | 12.9 | 17.6 |

| YoloV4 FPS | 9.6 | 11.7 | 16.0 |

Tesla T4

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 FPS | 27.6 | 32.3 | 45.1 |

| YoloV4 FPS | 24.0 | 30.3 | 40.1 |

Tesla P4

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 FPS | 20.2 | 24.2 | 31.2 |

| YoloV4 FPS | 16.2 | 20.2 | 26.5 |

Macbook Pro 15 (2.3GHz i7)

| Detection | 512x512 | 416x416 | 320x320 |

|---|---|---|---|

| YoloV3 FPS | | | |

| YoloV4 FPS | | | |

Training your own model

# Prepare your dataset

# If you want to train from scratch:

In config.py set FISRT_STAGE_EPOCHS=0

# Run script:

python train.py

# Transfer learning:

python train.py --weights ./data/yolov4.weights

The training performance is not fully reproduced yet, so I recommend using Alex's Darknet to train on your own data and then converting the .weights to tensorflow or tflite.

TODO

References

My project is inspired by these previous fantastic YOLOv3 implementations:
