你确认删除该任务么?此任务一旦删除不可恢复。

|

|

1年前 | |

|---|---|---|

| .ipynb_checkpoints | 1年前 | |

| ascend310_infer | 1年前 | |

| data | 1年前 | |

| imgs | 1年前 | |

| kernel_meta | 1年前 | |

| outputs | 1年前 | |

| scripts | 1年前 | |

| src | 1年前 | |

| README.md | 1年前 | |

| akg_build_tmp.key | 1年前 | |

| dsa_transfrom.log | 1年前 | |

| eval.py | 1年前 | |

| eval_onnx.py | 1年前 | |

| export.py | 1年前 | |

| output.train.log | 1年前 | |

| postprocess.py | 1年前 | |

| preprocess.py | 1年前 | |

| requirements.txt | 1年前 | |

| scalar_transform.log | 1年前 | |

| train.py | 1年前 | |

README.md

Contents

- CycleGAN Description

- Model Architecture

- Dataset

- Environment Requirements

- Script Description

- Model Description

- ModelZoo Homepage

CycleGAN Description

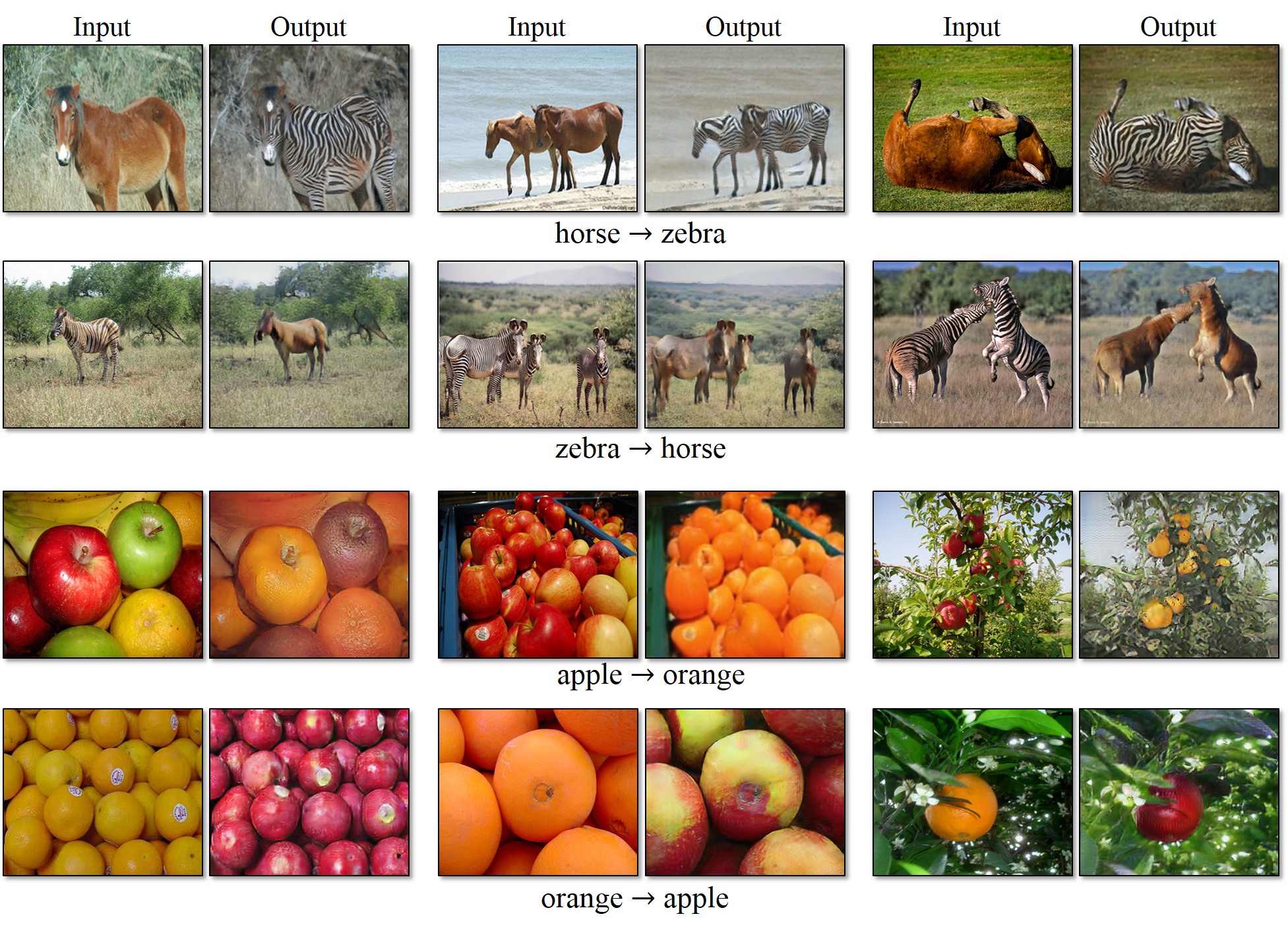

Image-to-image translation is a visual and image problem. Its goal is to use paired images as a training set and (let the machine) learn the mapping from input images to output images. However, in many tasks, paired training data cannot be obtained. CycleGAN does not require the training data to be paired. It only needs to provide images of different domains to successfully train the image mapping between different domains. CycleGAN shares two generators, and then each has a discriminator.

Paper: Zhu J Y , Park T , Isola P , et al. Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks[J]. 2017.

Model Architecture

The CycleGAN contains two generation networks and two discriminant networks.

Dataset

Download CycleGAN datasets and create your own datasets. We provide data/download_cyclegan_dataset.sh to download the datasets.

Environment Requirements

- Hardware(Ascend/GPU)

- Prepare hardware environment with Ascend or GPU processor.

- Framework

- For more information, please check the resources below:

Dependences

- Python==3.7.5

- Mindspore==1.2.0

Script Description

Script and Sample Code

The entire code structure is as following:

.CycleGAN

├─ README.md # descriptions about CycleGAN

├─ data

└─ download_cyclegan_dataset.sh.py # download dataset

├── scripts

└─ run_train_ascend.sh # launch ascend training(1 pcs)

└─ run_train_standalone_gpu.sh # launch gpu training(1 pcs)

└─ run_train_distributed_gpu.sh # launch gpu training(8 pcs)

└─ run_eval_ascend.sh # launch ascend eval

└─ run_eval_gpu.sh # launch gpu eval

└─ run_eval_onnx.sh # launch ONNX eval

└─ run_infer_310.sh # launch 310 infer

├─ imgs

└─ objects-transfiguration.jpg # CycleGAN Imgs

├─ ascend310_infer

├─ src

├─ main.cc # Ascend-310 inference source code

└─ utils.cc # Ascend-310 inference source code

├─ inc

└─ utils.h # Ascend-310 inference source code

├─ build.sh # Ascend-310 inference source code

├─ CMakeLists.txt # CMakeLists of Ascend-310 inference program

└─ fusion_switch.cfg # Use BatchNorm2d instead of InstanceNorm2d

├─ src

├─ __init__.py # init file

├─ dataset

├─ __init__.py # init file

├─ cyclegan_dataset.py # create cyclegan dataset

└─ distributed_sampler.py # iterator of dataset

├─ models

├─ __init__.py # init file

├─ cycle_gan.py # cyclegan model define

├─ losses.py # cyclegan losses function define

├─ networks.py # cyclegan sub networks define

├─ resnet.py # resnet generate network

└─ depth_resnet.py # better generate network

└─ utils

├─ __init__.py # init file

├─ args.py # parse args

├─ reporter.py # Reporter class

└─ tools.py # utils for cyclegan

├─ eval.py # generate images from A->B and B->A

├─ train.py # train script

├─ export.py # export mindir script

├─ preprocess.py # data preprocessing script for scend-310 inference

└─ postprocess.py # data post-processing script for scend-310 inference

Script Parameters

Major parameters in train.py and config.py as follows:

"platform": Ascend # run platform, only support GPU and Ascend.

"device_id": 0 # device id, default is 0.

"model": "resnet" # generator model.

"pool_size": 50 # the size of image buffer that stores previously generated images, default is 50.

"lr_policy": "linear" # learning rate policy, default is linear.

"image_size": 256 # input image_size, default is 256.

"batch_size": 1 # batch_size, default is 1.

"max_epoch": 200 # epoch size for training, default is 200.

"in_planes": 3 # input channels, default is 3.

"ngf": 64 # generator model filter numbers, default is 64.

"gl_num": 9 # generator model residual block numbers, default is 9.

"ndf": 64 # discriminator model filter numbers, default is 64.

"dl_num": 3 # discriminator model residual block numbers, default is 3.

"outputs_dir": "outputs" # models are saved here, default is ./outputs.

"dataroot": None # path of images (should have subfolders trainA, trainB, testA, testB, etc).

"load_ckpt": False # whether load pretrained ckpt.

"G_A_ckpt": None # pretrained checkpoint file path of G_A.

"G_B_ckpt": None # pretrained checkpoint file path of G_B.

"D_A_ckpt": None # pretrained checkpoint file path of D_A.

"D_B_ckpt": None # pretrained checkpoint file path of D_B.

Training

- running on Ascend with default parameters

sh ./scripts/run_train_ascend.sh

- running on GPU with default parameters

sh ./scripts/run_train_standalone_gpu.sh

- running on 8 GPUs with default parameters

sh ./scripts/run_train_distributed_gpu.sh

Evaluation

python eval.py --platform [PLATFORM] --dataroot [DATA_PATH] --G_A_ckpt [G_A_CKPT] --G_B_ckpt [G_B_CKPT]

Note: You will get the result as following in "./outputs_dir/predict".

ONNX evaluation

First, export your models:

python export.py --platform GPU --model ResNet --G_A_ckpt /path/to/GA.ckpt --G_B_ckpt /path/to/GB.ckpt --export_file_name /path/to/<prefix> --export_file_format ONNX

You will get two .onnx files: /path/to/<prefix>_AtoB.onnx and /path/to/<prefix>_BtoA.onnx

Next, run ONNX eval with the same export_file_name as in export:

python eval_onnx.py --platform [PLATFORM] --dataroot [DATA_PATH] --export_file_name /path/to/<prefix>

Note: You will get the result as following in "./outputs_dir/predict".

Inference Process

Export MindIR

python export.py --G_A_ckpt [CKPT_PATH_A] --G_B_ckpt [CKPT_PATH_A] --export_batch_size 1 --export_file_name [FILE_NAME] --export_file_format [FILE_FORMAT]

Infer on Ascend310

Before performing inference, the mindir file must be exported by export.py.Current batch_Size can only be set to 1.

# Ascend310 inference

bash ./scripts/run_infer_310.sh [MINDIR_PATH] [DATA_PATH] [DATA_MODE] [NEED_PREPROCESS] [DEVICE_TARGET] [DEVICE_ID]

DATA_PATHis mandatory, and must specify original data path.DATA_MODEis the translation direction of CycleGAN, it's value is 'AtoB' or 'BtoA'.NEED_PREPROCESSmeans weather need preprocess or not, it's value is 'y' or 'n'.DEVICE_IDis optional, default value is 0.

for example, on Ascend:

bash ./scripts/run_infer_310.sh ./310_infer/CycleGAN_AtoB.mindir ./data/horse2zebra AtoB y Ascend 0

Result

Inference result is saved in current path, you can find result in infer_output_img file.

Model Description

Performance

Training Performance

We use Depth Resnet Generator on Ascend and Resnet Generator on GPU.

| Parameters | single Ascend/GPU | 8 GPUs |

|---|---|---|

| Model Version | CycleGAN | CycleGAN |

| Resource | Ascend 910/NV SMX2 V100-32G | NV SMX2 V100-32G x 8 |

| MindSpore Version | 1.2 | 1.2 |

| Dataset | horse2zebra | horse2zebra |

| Training Parameters | epoch=200, steps=1334, batch_size=1, lr=0.0002 | epoch=600, steps=166, batch_size=8, lr=0.0002 |

| Optimizer | Adam | Adam |

| Loss Function | Mean Sqare Loss & L1 Loss | Mean Sqare Loss & L1 Loss |

| outputs | probability | probability |

| Speed | 1pc(Ascend): 123 ms/step; 1pc(GPU): 190 ms/step | 190 ms/step |

| Total time | 1pc(Ascend): 9.6h; 1pc(GPU): 14.9h; | 5.7h |

| Checkpoint for Fine tuning | 44M (.ckpt file) | 44M (.ckpt file) |

Evaluation Performance

| Parameters | single Ascend/GPU |

|---|---|

| Model Version | CycleGAN |

| Resource | Ascend 910/NV SMX2 V100-32G |

| MindSpore Version | 1.2 |

| Dataset | horse2zebra |

| batch_size | 1 |

| outputs | transferred images |

ModelZoo Homepage

Please check the official homepage.