bmshj2018

"Variational image compression with a scale hyperprior"

keywords: image compression, variational autoencoder, scale hyperprior

bmshj2018 is an end-to-end trainable image compression model based on variational autoencoders. The paper reports that it outperforms earlier artificial-neural-network-based methods on image quality metrics such as MS-SSIM and PSNR.

our contributions

1. Translated the model from PyTorch to MindSpore.

2. Successfully ran the forward pass, backward pass, and parameter update.

3. Tested and compared the MindSpore version against the PyTorch version.

file structure

The translated model file is compressai/models/google_mindspore.py.

environment

- mindspore-dev==2.0.0dev20230116

- Ubuntu 16.04

- CUDA 11.1

- Python 3.7

command

Install compressai by running

pip install -e .

from the CompressAI_MindSpore directory.

To use the translated models, import them from compressai.zoo and instantiate them:

from compressai.zoo import bmshj2018_factorized, bmshj2018_hyperprior

model = bmshj2018_factorized(quality=2, pretrained=False)

model = bmshj2018_hyperprior(quality=2, pretrained=False)

test

See demo.ipynb for how to use the models, including the forward pass, backward pass, and parameter update.

Alternatively, use test_ms.py to test the MindSpore model:

1. pip install -e .

2. python test_ms.py

Use test_torch.py to test the PyTorch model:

1. Rename the directory 'compressai' to 'compressai1'.

2. pip install compressai

3. python test_torch.py

comparison

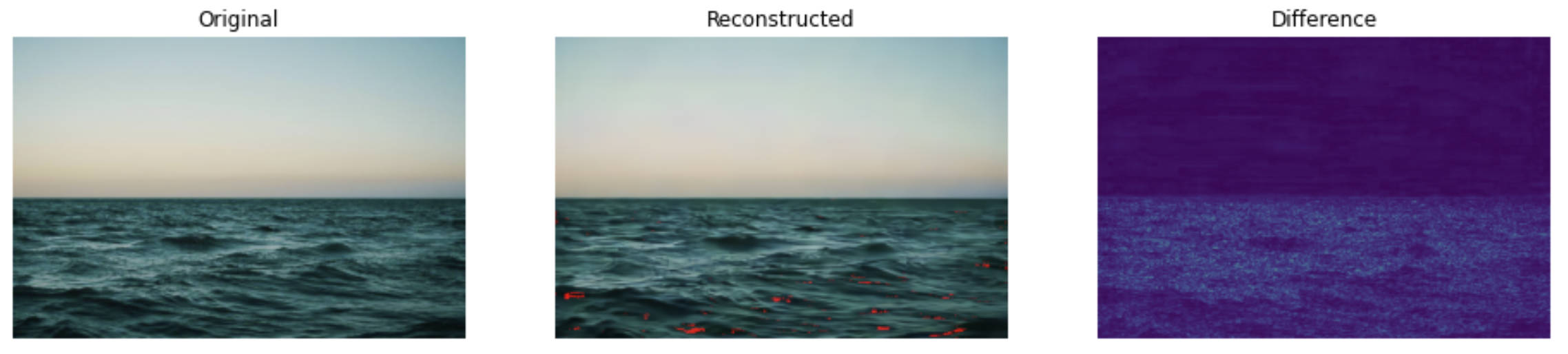

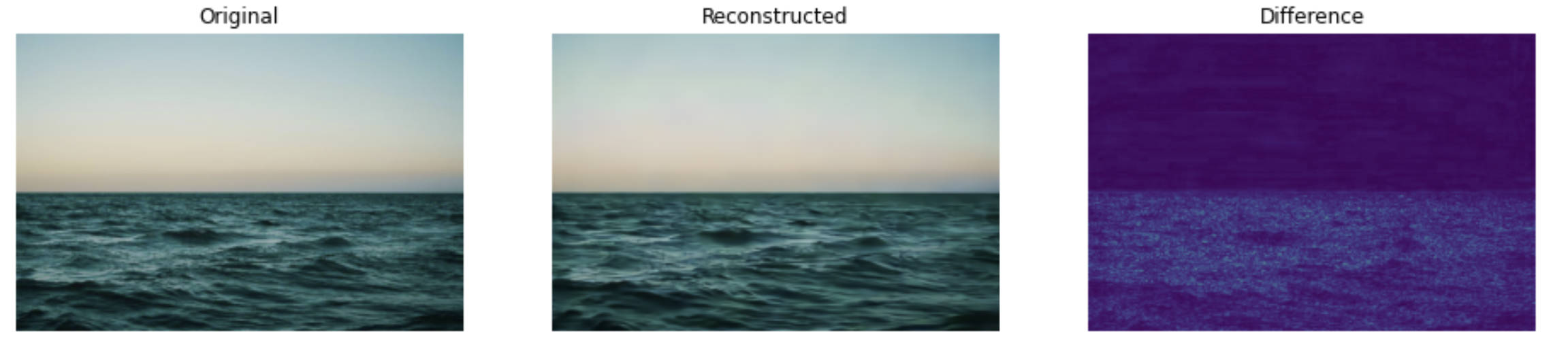

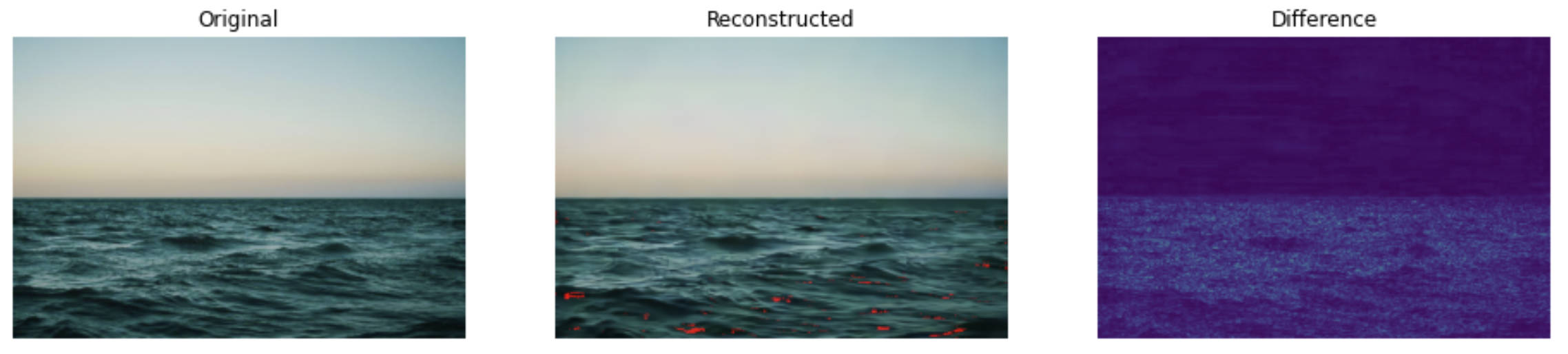

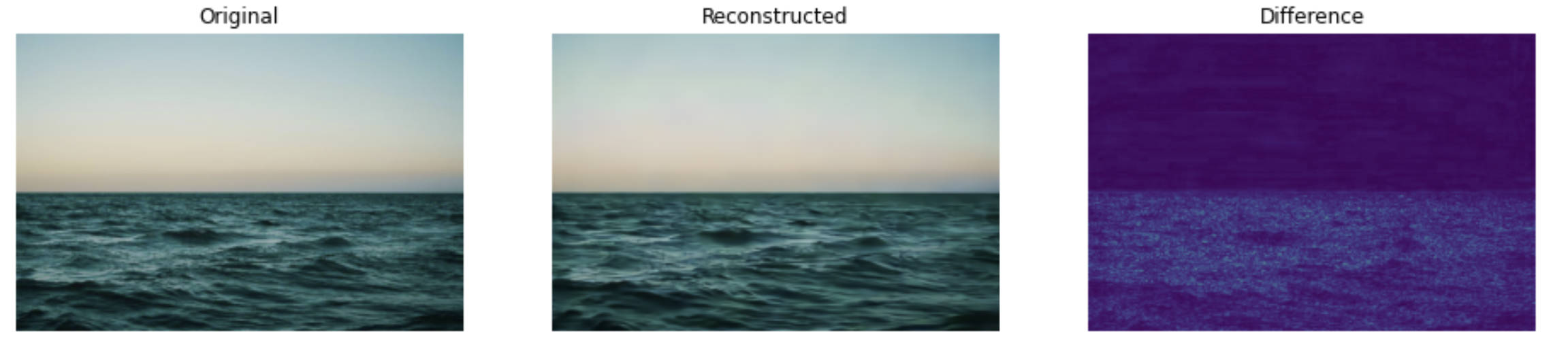

reconstructed image

MindSpore version

PyTorch version

quality measurements (bmshj2018_hyperprior)

Results of the models at different bitrates on the DIV2K test dataset:

| qp | bpp | PSNR | MS-SSIM | time_cost | GPU M |
|----|-----|------|---------|-----------|-------|
| 1 | 0.296 | 27.582 | 0.917 | 6.212 | 4004 |
| 2 | 0.372 | 29.197 | 0.942 | 3.505 | 4004 |
| 5 | 0.822 | 34.526 | 0.984 | 3.462 | 4004 |
| 7 | 1.493 | 38.584 | 0.993 | 5.111 | 4004 |
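For readers unfamiliar with the bpp and PSNR columns above, the following is a minimal sketch of how these two metrics are commonly defined. It assumes 8-bit images (peak value 255) and a bitrate measured from the compressed size in bytes. The function names `bits_per_pixel` and `psnr` are illustrative only and are not part of compressai, test_ms.py, or test_torch.py, which may compute these values differently.

```python
import math


def bits_per_pixel(num_bytes, height, width):
    """Bitrate in bits per pixel for a compressed image of `num_bytes` bytes."""
    return num_bytes * 8 / (height * width)


def psnr(original, reconstructed, max_value=255.0):
    """PSNR in dB between two equal-length flat sequences of pixel values."""
    if len(original) == 0 or len(original) != len(reconstructed):
        raise ValueError("inputs must be non-empty and have the same length")
    # Mean squared error over all pixels.
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return float("inf")  # identical images
    return 10 * math.log10(max_value ** 2 / mse)


# Toy example with made-up numbers (not taken from the table):
# a 2x2 "image" stored in 2 bytes, and a reconstruction off by 1 everywhere.
print(bits_per_pixel(num_bytes=2, height=2, width=2))      # 4.0
print(round(psnr([10, 20, 30, 40], [11, 21, 31, 41]), 2))  # 48.13
```

The same `psnr` helper could also be applied to the flattened reconstructions of the MindSpore and PyTorch versions to quantify how closely the two outputs agree.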

source

Translated from the PyTorch implementation in CompressAI.

Link: InterDigitalInc/CompressAI: A PyTorch library and evaluation platform for end-to-end compression research (github.com)

citation

@article{balle2018variational,

title={Variational image compression with a scale hyperprior},

author={Ball{\'e}, Johannes and Minnen, David and Singh, Saurabh and Hwang, Sung Jin and Johnston, Nick},

journal={arXiv preprint arXiv:1802.01436},

year={2018}

}

contributors

name: Kaiyu Zheng, Yongchi Zhang