pcc_attr_folding

keywords: point cloud, attribute compression, neural network

pcc_attr_folding is a lossy point cloud attribute compression method based on deep learning. The original code uses TensorFlow as its deep learning framework; this repository ports it to TensorLayer.

our contributions

1. Port the code from TensorFlow to TensorLayer.

2. Test on the test sets with both TensorFlow and TensorLayer.

3. Organize the code files, document the architecture, and explain the method and the paper.

4. Run an ablation test on the encoded vector length, 128 vs. 256.

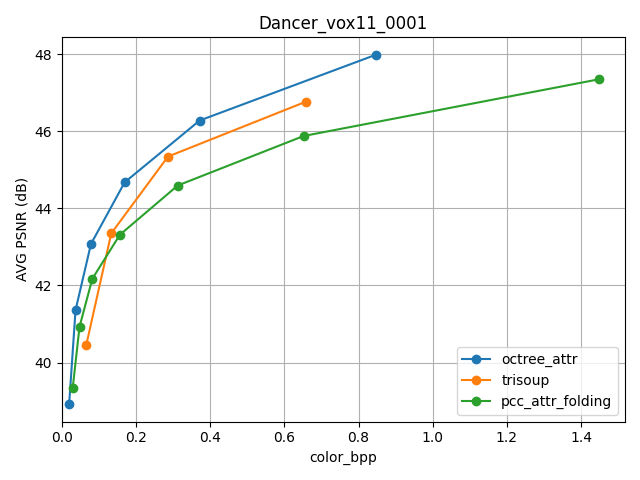

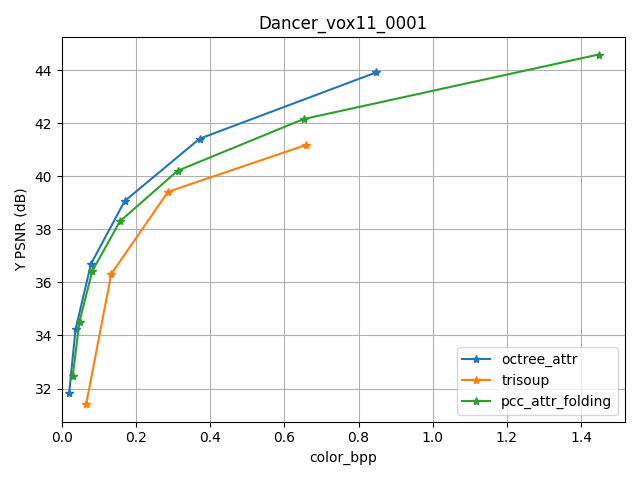

5. Test with Dancer_vox11_0001.ply to compare pcc_attr_folding with octree and trisoup.

file structure

root

└── gpcc: gpcc coding result

└── gpcc_test: gpcc codec program

└── manual_patches_tf_LocalTestResult: local test on original code with redandblack_vox10_1550

└── manual_patches_tl: test on tensorlayer with redandblack_vox10_1550

└── tensorflow src code by author

└── src_tensorlayer: code in tensorlayer after migration

└── FOLDING-BASED COMPRESSION OF POINT CLOUD ATTRIBUTES.pdf: original paper

└── libbpg-0.9.8.tar.gz: BPG installation package for the image codec

└── pcc_attr_folding_explanation.docx: introduction for paper, migration, performance, instructions

└── requirements.txt: modules to run the code

└── code file architecture.vsdx: indicates the relationship between all files

environment

Refer to requirements.txt and src/README.md, as well as pcc_attr_folding_explanation.docx.

command

train and compress on tensorlayer:

python 61_run_folding.py 91_expdata_full.yml /userhome/pcc_attr_folding/pcc_attr_folding-master/manual_patches_tl/

gpcc codec:

python 50_run_mpeg.py 91_expdata_full.yml ../gpcc

compare the results of gpcc and pcc_attr_folding:

python 70_run_eval_compare.py 91_expdata_full.yml ../gpcc/ ../manual_patches_tl/
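The three commands above can be chained from one small driver. The following is only a sketch: the script names, the config file and the relative paths are taken from the commands above, while `build_commands`, `run_all` and the `dry_run` flag are hypothetical helpers. It assumes the scripts are run from the directory that contains them and that the TensorLayer output directory is the same in the first and third step.

```python
import subprocess
import sys

CONFIG = "91_expdata_full.yml"  # experiment config used by all three commands


def build_commands(tl_dir="../manual_patches_tl/", gpcc_dir="../gpcc"):
    """Return the three commands as argument lists, in run order."""
    return [
        # 1. train and compress with the TensorLayer port
        [sys.executable, "61_run_folding.py", CONFIG, tl_dir],
        # 2. run the G-PCC codec on the same experiment data
        [sys.executable, "50_run_mpeg.py", CONFIG, gpcc_dir],
        # 3. compare the G-PCC results with the pcc_attr_folding results
        [sys.executable, "70_run_eval_compare.py", CONFIG, gpcc_dir, tl_dir],
    ]


def run_all(dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in build_commands():
        print(" ".join(cmd))
        if not dry_run:
            # check=True stops the chain at the first failing step
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run_all(dry_run=True)
```

With `dry_run=True` the driver only prints the commands, so the paths can be checked before starting a long training run.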

ablation test

During geometry coding, the model encodes the point cloud into a vector of 128 values. We suspected that 128 values might be too few to carry enough features, so we ran an ablation test that increases the length to 256 to see whether the PSNR improves.

The table below compares the bpp and the Y, U, V PSNR for lengths 128 and 256. There is no consistent improvement, and for some point clouds the results with 256 are worse (for example queen_vox10_0200, where the Y PSNR drops by roughly 1 to 3 dB at similar bpp). Increasing the encoded vector length is therefore not an effective optimization.

| input_pc | rate | n_points_ori | color_bpp(256) | y_psnr(256) | u_psnr(256) | v_psnr(256) | color_bpp(128) | y_psnr(128) | u_psnr(128) | v_psnr(128) |
|---|---|---|---|---|---|---|---|---|---|---|
| dancer_vox11_00000001 | refined_opt_qp_20 | 2592758 | 1.406 | 44.367 | 46.8 | 50.443 | 1.447 | 44.592 | 46.854 | 50.595 |
| dancer_vox11_00000001 | refined_opt_qp_25 | 2592758 | 0.631 | 42.014 | 45.874 | 49.41 | 0.652 | 42.159 | 45.928 | 49.544 |
| dancer_vox11_00000001 | refined_opt_qp_30 | 2592758 | 0.304 | 40.075 | 44.974 | 48.342 | 0.312 | 40.213 | 45.093 | 48.473 |
| dancer_vox11_00000001 | refined_opt_qp_35 | 2592758 | 0.154 | 38.115 | 44.087 | 47.095 | 0.156 | 38.302 | 44.248 | 47.393 |
| dancer_vox11_00000001 | refined_opt_qp_40 | 2592758 | 0.081 | 36.196 | 43.476 | 46.027 | 0.082 | 36.43 | 43.688 | 46.402 |
| dancer_vox11_00000001 | refined_opt_qp_45 | 2592758 | 0.047 | 34.327 | 42.81 | 44.99 | 0.047 | 34.526 | 42.904 | 45.347 |
| dancer_vox11_00000001 | refined_opt_qp_50 | 2592758 | 0.029 | 32.237 | 41.678 | 43.183 | 0.029 | 32.468 | 41.949 | 43.586 |
| longdress_vox10_1300 | refined_opt_qp_20 | 857966 | 5.614 | 41.632 | 40.111 | 38.561 | 5.441 | 41.571 | 40.316 | 38.695 |
| longdress_vox10_1300 | refined_opt_qp_25 | 857966 | 3.536 | 38.851 | 38.938 | 37.653 | 3.406 | 38.795 | 39.059 | 37.744 |
| longdress_vox10_1300 | refined_opt_qp_30 | 857966 | 1.923 | 35.272 | 37.249 | 36.213 | 1.841 | 35.221 | 37.287 | 36.266 |
| longdress_vox10_1300 | refined_opt_qp_35 | 857966 | 0.91 | 31.871 | 35.638 | 34.714 | 0.877 | 31.926 | 35.626 | 34.686 |
| longdress_vox10_1300 | refined_opt_qp_40 | 857966 | 0.418 | 29.284 | 34.365 | 33.475 | 0.414 | 29.379 | 34.338 | 33.422 |
| longdress_vox10_1300 | refined_opt_qp_45 | 857966 | 0.209 | 27.308 | 33.09 | 32.144 | 0.209 | 27.376 | 33.106 | 32.111 |
| longdress_vox10_1300 | refined_opt_qp_50 | 857966 | 0.103 | 25.454 | 31.606 | 30.597 | 0.104 | 25.44 | 31.538 | 30.475 |
| loot_vox10_1200 | refined_opt_qp_20 | 805285 | 1.615 | 44.281 | 50.906 | 50.737 | 1.489 | 43.448 | 50.822 | 50.52 |
| loot_vox10_1200 | refined_opt_qp_25 | 805285 | 0.825 | 41.437 | 49.494 | 49.388 | 0.769 | 41.048 | 49.492 | 49.123 |
| loot_vox10_1200 | refined_opt_qp_30 | 805285 | 0.381 | 38.647 | 48.228 | 48.046 | 0.361 | 38.448 | 48.066 | 47.627 |
| loot_vox10_1200 | refined_opt_qp_35 | 805285 | 0.179 | 36.4 | 46.908 | 46.556 | 0.169 | 36.241 | 46.749 | 46.389 |
| loot_vox10_1200 | refined_opt_qp_40 | 805285 | 0.087 | 34.41 | 45.451 | 45.372 | 0.081 | 34.223 | 45.605 | 45.064 |
| loot_vox10_1200 | refined_opt_qp_45 | 805285 | 0.044 | 32.594 | 44.027 | 43.34 | 0.041 | 32.376 | 43.63 | 42.998 |
| loot_vox10_1200 | refined_opt_qp_50 | 805285 | 0.024 | 30.98 | 42.124 | 42.274 | 0.023 | 30.851 | 42.244 | 41.44 |
| queen_vox10_0200 | refined_opt_qp_20 | 1000994 | 2.15 | 35.182 | 37.27 | 34.339 | 2.093 | 38.025 | 38.447 | 36.032 |
| queen_vox10_0200 | refined_opt_qp_25 | 1000994 | 1.279 | 34.714 | 36.924 | 34.178 | 1.221 | 37.182 | 38.126 | 35.856 |
| queen_vox10_0200 | refined_opt_qp_30 | 1000994 | 0.764 | 33.946 | 36.317 | 33.786 | 0.727 | 35.967 | 37.54 | 35.49 |
| queen_vox10_0200 | refined_opt_qp_35 | 1000994 | 0.44 | 32.579 | 35.443 | 33.193 | 0.423 | 34.123 | 36.606 | 34.867 |
| queen_vox10_0200 | refined_opt_qp_40 | 1000994 | 0.234 | 30.319 | 34.661 | 32.339 | 0.233 | 31.587 | 35.817 | 34.081 |
| queen_vox10_0200 | refined_opt_qp_45 | 1000994 | 0.1 | 27.182 | 33.924 | 31.24 | 0.105 | 28.156 | 35.126 | 32.808 |
| queen_vox10_0200 | refined_opt_qp_50 | 1000994 | 0.035 | 24.442 | 33.209 | 30.19 | 0.039 | 25.382 | 34.163 | 31.266 |
| redandblack_vox10_1550 | refined_opt_qp_20 | 757691 | 2.191 | 41.006 | 43.92 | 36.953 | 2.403 | 42.83 | 44.504 | 38.559 |
| redandblack_vox10_1550 | refined_opt_qp_25 | 757691 | 1.241 | 39.37 | 42.62 | 36.393 | 1.315 | 40.614 | 43.111 | 37.79 |
| redandblack_vox10_1550 | refined_opt_qp_30 | 757691 | 0.614 | 37.084 | 41.114 | 35.253 | 0.638 | 38.041 | 41.599 | 36.429 |
| redandblack_vox10_1550 | refined_opt_qp_35 | 757691 | 0.301 | 34.955 | 39.885 | 33.877 | 0.326 | 35.911 | 40.35 | 34.847 |
| redandblack_vox10_1550 | refined_opt_qp_40 | 757691 | 0.158 | 33.136 | 39.127 | 32.508 | 0.175 | 33.978 | 39.46 | 33.274 |
| redandblack_vox10_1550 | refined_opt_qp_45 | 757691 | 0.089 | 31.359 | 38.346 | 31.096 | 0.101 | 32.213 | 38.792 | 31.83 |
| redandblack_vox10_1550 | refined_opt_qp_50 | 757691 | 0.049 | 29.718 | 37.416 | 29.233 | 0.054 | 30.392 | 37.524 | 29.757 |
| soldier_vox10_0690 | refined_opt_qp_20 | 1089091 | 2.993 | 42.627 | 50.037 | 50.526 | 2.808 | 40.593 | 49.897 | 50.326 |
| soldier_vox10_0690 | refined_opt_qp_25 | 1089091 | 1.629 | 39.859 | 48.405 | 48.727 | 1.514 | 38.689 | 48.264 | 48.589 |
| soldier_vox10_0690 | refined_opt_qp_30 | 1089091 | 0.786 | 36.919 | 46.61 | 46.985 | 0.73 | 36.31 | 46.438 | 46.767 |
| soldier_vox10_0690 | refined_opt_qp_35 | 1089091 | 0.381 | 34.47 | 45.318 | 45.631 | 0.36 | 34.099 | 45.153 | 45.294 |
| soldier_vox10_0690 | refined_opt_qp_40 | 1089091 | 0.193 | 32.347 | 44.185 | 44.607 | 0.181 | 32.004 | 44.131 | 44.402 |
| soldier_vox10_0690 | refined_opt_qp_45 | 1089091 | 0.096 | 30.255 | 43.192 | 43.348 | 0.091 | 30 | 42.82 | 43.274 |
| soldier_vox10_0690 | refined_opt_qp_50 | 1089091 | 0.048 | 28.155 | 40.93 | 40.547 | 0.045 | 27.976 | 41.41 | 40.823 |
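
One simple way to read the ablation numbers is to average the Y-PSNR difference between the two vector lengths at a fixed rate setting. The sketch below does this for refined_opt_qp_20, using the Y-PSNR values from the ablation table; the `summarize` helper is hypothetical and is not part of the repository.

```python
# Y-PSNR (dB) at refined_opt_qp_20, copied from the ablation table.
# Each value is (length 256, length 128).
Y_PSNR_QP20 = {
    "dancer_vox11_00000001": (44.367, 44.592),
    "longdress_vox10_1300": (41.632, 41.571),
    "loot_vox10_1200": (44.281, 43.448),
    "queen_vox10_0200": (35.182, 38.025),
    "redandblack_vox10_1550": (41.006, 42.830),
    "soldier_vox10_0690": (42.627, 40.593),
}


def summarize(rows):
    """Return (mean of PSNR_256 - PSNR_128, number of clouds where 256 is higher)."""
    deltas = [p256 - p128 for p256, p128 in rows.values()]
    mean_delta = sum(deltas) / len(deltas)
    wins_256 = sum(d > 0 for d in deltas)
    return mean_delta, wins_256


mean_delta, wins_256 = summarize(Y_PSNR_QP20)
print(f"mean Y-PSNR change (256 - 128): {mean_delta:+.3f} dB")
print(f"256 is higher on {wins_256} of {len(Y_PSNR_QP20)} point clouds")
```

This comparison ignores the small bpp differences between the two settings, so it is only a rough indicator and not a rate-distortion measure.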

performance

- Test on redandblack_vox10_1550.ply in refined_opt mode. The table lists the results from different sources.

| source | BPG compression parameter (QP) | color bpp | Y PSNR | U PSNR | V PSNR |
|---|---|---|---|---|---|
| paper | 20 | 0.1-2.3 | 31-44 | not mentioned | not mentioned |
| TensorFlow-GPU | 20 | 2.44 | 43.59 | 44.58 | 38.25 |
| TensorLayer-GPU | 20 | 2.43 | 44.52 | 44.69 | 38.88 |
| TensorFlow-GPU | 50 | 0.066 | 30.67 | 37.8 | 29.79 |
| TensorLayer-GPU | 50 | 0.066 | 30.74 | 37.77 | 29.99 |
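
For readers unfamiliar with the metrics in this table, the sketch below shows how color bpp and per-channel PSNR are commonly defined. It is not taken from the repository's evaluation code: the function names are hypothetical, the BT.709 RGB-to-YUV conversion is an assumption (this README does not state which conversion the evaluation uses), and the sample values are made up for illustration.

```python
import math


def color_bpp(n_compressed_bytes, n_points):
    """Bits per point spent on the compressed color attributes."""
    return 8.0 * n_compressed_bytes / n_points


def rgb_to_yuv_bt709(r, g, b):
    """Convert one 8-bit RGB triple to YUV (assumed BT.709, chroma offset 128)."""
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    u = (b - y) / 1.8556 + 128.0
    v = (r - y) / 1.5748 + 128.0
    return y, u, v


def psnr(original, decoded, peak=255.0):
    """PSNR in dB between two equal-length sequences of channel values."""
    mse = sum((a - b) ** 2 for a, b in zip(original, decoded)) / len(original)
    if mse == 0:
        return math.inf  # identical signals
    return 10.0 * math.log10(peak ** 2 / mse)


# Illustrative values only: two points, each decoded with an error of 1 in Y.
y_original = [10.0, 20.0]
y_decoded = [11.0, 21.0]
print(f"Y PSNR: {psnr(y_original, y_decoded):.2f} dB")
print(f"color bpp: {color_bpp(1000, 8000):.3f}")
```

Y, U and V PSNR are obtained by applying `psnr` to each channel separately after the color conversion.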

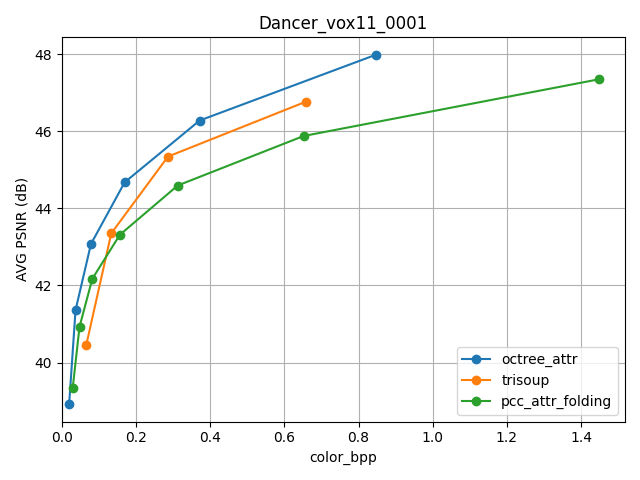

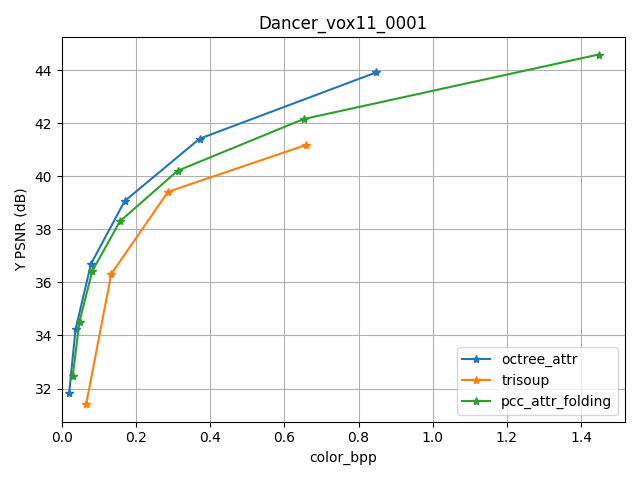

- Test on Dancer_vox11_0001.ply, with graphs comparing the performance of pcc_attr_folding against octree and trisoup. The figures show that octree performs best. On average PSNR, trisoup is better than pcc_attr_folding, while on Y PSNR, pcc_attr_folding is better than trisoup.

- Test on the test sets in refined_opt mode, comparing TensorFlow (TF) and TensorLayer (TL). In most rows of the table below, the Y, U, V PSNR of TL is slightly higher than that of TF, and the bpp of TL is usually higher as well. The two implementations therefore perform similarly.

| input_pc | rate | TL_color_bpp | TL_y_psnr | TL_u_psnr | TL_v_psnr | TF_color_bpp | TF_y_psnr | TF_u_psnr | TF_v_psnr |
|---|---|---|---|---|---|---|---|---|---|
| dancer_vox11_00000001 | refined_opt_qp_20 | 1.543 | 44.854 | 46.929 | 50.616 | 1.447 | 44.592 | 46.854 | 50.595 |
| dancer_vox11_00000001 | refined_opt_qp_25 | 0.69 | 42.343 | 46.002 | 49.607 | 0.652 | 42.159 | 45.928 | 49.544 |
| dancer_vox11_00000001 | refined_opt_qp_30 | 0.329 | 40.338 | 45.091 | 48.508 | 0.312 | 40.213 | 45.093 | 48.473 |
| dancer_vox11_00000001 | refined_opt_qp_35 | 0.164 | 38.397 | 44.209 | 47.237 | 0.156 | 38.302 | 44.248 | 47.393 |
| dancer_vox11_00000001 | refined_opt_qp_40 | 0.085 | 36.499 | 43.548 | 46.391 | 0.082 | 36.43 | 43.688 | 46.402 |
| dancer_vox11_00000001 | refined_opt_qp_45 | 0.05 | 34.62 | 42.989 | 45.196 | 0.047 | 34.526 | 42.904 | 45.347 |
| dancer_vox11_00000001 | refined_opt_qp_50 | 0.031 | 32.575 | 42.037 | 43.391 | 0.029 | 32.468 | 41.949 | 43.586 |
| longdress_vox10_1300 | refined_opt_qp_20 | 5.513 | 41.938 | 40.39 | 38.93 | 5.441 | 41.571 | 40.316 | 38.695 |
| longdress_vox10_1300 | refined_opt_qp_25 | 3.414 | 39.018 | 39.175 | 37.961 | 3.406 | 38.795 | 39.059 | 37.744 |
| longdress_vox10_1300 | refined_opt_qp_30 | 1.842 | 35.439 | 37.413 | 36.459 | 1.841 | 35.221 | 37.287 | 36.266 |
| longdress_vox10_1300 | refined_opt_qp_35 | 0.888 | 32.123 | 35.73 | 34.957 | 0.877 | 31.926 | 35.626 | 34.686 |
| longdress_vox10_1300 | refined_opt_qp_40 | 0.426 | 29.545 | 34.471 | 33.619 | 0.414 | 29.379 | 34.338 | 33.422 |
| longdress_vox10_1300 | refined_opt_qp_45 | 0.217 | 27.507 | 33.245 | 32.362 | 0.209 | 27.376 | 33.106 | 32.111 |
| longdress_vox10_1300 | refined_opt_qp_50 | 0.106 | 25.554 | 31.693 | 30.733 | 0.104 | 25.44 | 31.538 | 30.475 |
| loot_vox10_1200 | refined_opt_qp_20 | 1.648 | 44.959 | 51.089 | 50.934 | 1.489 | 43.448 | 50.822 | 50.52 |
| loot_vox10_1200 | refined_opt_qp_25 | 0.845 | 42.029 | 49.707 | 49.611 | 0.769 | 41.048 | 49.492 | 49.123 |
| loot_vox10_1200 | refined_opt_qp_30 | 0.4 | 39.169 | 48.357 | 48.245 | 0.361 | 38.448 | 48.066 | 47.627 |
| loot_vox10_1200 | refined_opt_qp_35 | 0.193 | 36.869 | 46.961 | 46.627 | 0.169 | 36.241 | 46.749 | 46.389 |
| loot_vox10_1200 | refined_opt_qp_40 | 0.096 | 34.776 | 45.735 | 45.387 | 0.081 | 34.223 | 45.605 | 45.064 |
| loot_vox10_1200 | refined_opt_qp_45 | 0.047 | 32.775 | 43.959 | 43.332 | 0.041 | 32.376 | 43.63 | 42.998 |
| loot_vox10_1200 | refined_opt_qp_50 | 0.027 | 31.151 | 42.399 | 42.108 | 0.023 | 30.851 | 42.244 | 41.44 |
| phil_vox10_0139 | refined_opt_qp_20 | 2.437 | 39.855 | 42.875 | 41.598 | 2.509 | 40.643 | 42.795 | 41.519 |
| phil_vox10_0139 | refined_opt_qp_25 | 1.266 | 38.191 | 41.864 | 40.659 | 1.311 | 38.683 | 41.815 | 40.598 |
| phil_vox10_0139 | refined_opt_qp_30 | 0.574 | 36.034 | 40.711 | 39.471 | 0.592 | 36.287 | 40.618 | 39.438 |
| phil_vox10_0139 | refined_opt_qp_35 | 0.268 | 34.152 | 39.565 | 38.232 | 0.279 | 34.326 | 39.536 | 38.304 |
| phil_vox10_0139 | refined_opt_qp_40 | 0.139 | 32.499 | 38.743 | 37.032 | 0.145 | 32.654 | 38.74 | 37.212 |
| phil_vox10_0139 | refined_opt_qp_45 | 0.077 | 30.839 | 38.058 | 35.908 | 0.078 | 30.903 | 37.917 | 36.028 |
| phil_vox10_0139 | refined_opt_qp_50 | 0.043 | 28.928 | 36.894 | 34.903 | 0.043 | 29.067 | 36.975 | 34.826 |
| phil_vox9_0139 | refined_opt_qp_20 | 4.583 | 39.202 | 41.978 | 39.835 | 3.669 | 36.017 | 41.605 | 39.489 |
| phil_vox9_0139 | refined_opt_qp_25 | 2.741 | 37.439 | 41.047 | 38.902 | 2.215 | 35.07 | 40.688 | 38.571 |
| phil_vox9_0139 | refined_opt_qp_30 | 1.392 | 34.603 | 39.878 | 37.65 | 1.13 | 33.171 | 39.567 | 37.325 |
| phil_vox9_0139 | refined_opt_qp_35 | 0.601 | 31.726 | 38.85 | 36.642 | 0.474 | 30.83 | 38.549 | 36.268 |
| phil_vox9_0139 | refined_opt_qp_40 | 0.282 | 29.702 | 38.201 | 35.91 | 0.226 | 29.149 | 37.911 | 35.816 |
| phil_vox9_0139 | refined_opt_qp_45 | 0.142 | 27.867 | 37.609 | 35.547 | 0.114 | 27.457 | 37.341 | 35.473 |
| phil_vox9_0139 | refined_opt_qp_50 | 0.071 | 26.031 | 36.421 | 35.066 | 0.058 | 25.629 | 36.26 | 34.887 |
| queen_vox10_0200 | refined_opt_qp_20 | 2.223 | 39.783 | 39.7 | 37.466 | 2.093 | 38.025 | 38.447 | 36.032 |
| queen_vox10_0200 | refined_opt_qp_25 | 1.302 | 38.616 | 39.341 | 37.262 | 1.221 | 37.182 | 38.126 | 35.856 |
| queen_vox10_0200 | refined_opt_qp_30 | 0.777 | 37.026 | 38.727 | 36.861 | 0.727 | 35.967 | 37.54 | 35.49 |
| queen_vox10_0200 | refined_opt_qp_35 | 0.46 | 34.959 | 37.854 | 36.262 | 0.423 | 34.123 | 36.606 | 34.867 |
| queen_vox10_0200 | refined_opt_qp_40 | 0.256 | 32.244 | 36.882 | 35.509 | 0.233 | 31.587 | 35.817 | 34.081 |
| queen_vox10_0200 | refined_opt_qp_45 | 0.12 | 29.056 | 35.79 | 34.16 | 0.105 | 28.156 | 35.126 | 32.808 |
| queen_vox10_0200 | refined_opt_qp_50 | 0.046 | 26.189 | 34.627 | 32.518 | 0.039 | 25.382 | 34.163 | 31.266 |
| redandblack_vox10_1550 | refined_opt_qp_20 | 2.365 | 43.611 | 44.611 | 38.438 | 2.403 | 42.83 | 44.504 | 38.559 |
| redandblack_vox10_1550 | refined_opt_qp_25 | 1.286 | 41.102 | 43.15 | 37.695 | 1.315 | 40.614 | 43.111 | 37.79 |
| redandblack_vox10_1550 | refined_opt_qp_30 | 0.628 | 38.37 | 41.618 | 36.369 | 0.638 | 38.041 | 41.599 | 36.429 |
| redandblack_vox10_1550 | refined_opt_qp_35 | 0.315 | 36.115 | 40.399 | 34.887 | 0.326 | 35.911 | 40.35 | 34.847 |
| redandblack_vox10_1550 | refined_opt_qp_40 | 0.173 | 34.256 | 39.377 | 33.428 | 0.175 | 33.978 | 39.46 | 33.274 |
| redandblack_vox10_1550 | refined_opt_qp_45 | 0.1 | 32.387 | 38.692 | 31.858 | 0.101 | 32.213 | 38.792 | 31.83 |
| redandblack_vox10_1550 | refined_opt_qp_50 | 0.054 | 30.549 | 37.746 | 29.8 | 0.054 | 30.392 | 37.524 | 29.757 |
| sarah_vox10_0023 | refined_opt_qp_20 | 0.605 | 44.907 | 49.338 | 47.64 | 0.638 | 44.159 | 49.087 | 47.064 |
| sarah_vox10_0023 | refined_opt_qp_25 | 0.312 | 43.551 | 48.381 | 46.812 | 0.33 | 42.974 | 48.107 | 46.275 |
| sarah_vox10_0023 | refined_opt_qp_30 | 0.165 | 41.985 | 47.29 | 45.867 | 0.173 | 41.526 | 47.016 | 45.185 |
| sarah_vox10_0023 | refined_opt_qp_35 | 0.09 | 40.289 | 46.023 | 44.542 | 0.094 | 39.884 | 45.731 | 43.971 |
| sarah_vox10_0023 | refined_opt_qp_40 | 0.052 | 38.476 | 45.165 | 43.586 | 0.055 | 38.032 | 44.69 | 43.093 |
| sarah_vox10_0023 | refined_opt_qp_45 | 0.033 | 36.795 | 43.761 | 42.468 | 0.033 | 36.176 | 43.671 | 41.949 |
| sarah_vox10_0023 | refined_opt_qp_50 | 0.023 | 34.876 | 42.001 | 40.687 | 0.023 | 34.347 | 42.107 | 40.585 |
| sarah_vox9_0023 | refined_opt_qp_20 | 1.357 | 46.025 | 48.019 | 46.327 | 1.159 | 44.09 | 47.688 | 45.937 |
| sarah_vox9_0023 | refined_opt_qp_25 | 0.721 | 43.437 | 47.058 | 45.495 | 0.617 | 42.158 | 46.749 | 45.116 |
| sarah_vox9_0023 | refined_opt_qp_30 | 0.376 | 40.939 | 45.832 | 44.318 | 0.321 | 40.077 | 45.482 | 43.929 |
| sarah_vox9_0023 | refined_opt_qp_35 | 0.195 | 38.555 | 44.39 | 43.113 | 0.166 | 37.96 | 44.092 | 42.691 |
| sarah_vox9_0023 | refined_opt_qp_40 | 0.106 | 36.523 | 43.53 | 42.153 | 0.092 | 36 | 42.915 | 41.318 |
| sarah_vox9_0023 | refined_opt_qp_45 | 0.061 | 34.608 | 41.938 | 40.97 | 0.054 | 34.134 | 41.807 | 40.346 |
| sarah_vox9_0023 | refined_opt_qp_50 | 0.038 | 32.528 | 40.242 | 39.021 | 0.034 | 32.101 | 39.407 | 38.723 |
| soldier_vox10_0690 | refined_opt_qp_20 | 3.166 | 43.835 | 50.246 | 50.746 | 2.808 | 40.593 | 49.897 | 50.326 |
| soldier_vox10_0690 | refined_opt_qp_25 | 1.696 | 40.566 | 48.594 | 48.926 | 1.514 | 38.689 | 48.264 | 48.589 |
| soldier_vox10_0690 | refined_opt_qp_30 | 0.805 | 37.416 | 46.854 | 47.259 | 0.73 | 36.31 | 46.438 | 46.767 |
| soldier_vox10_0690 | refined_opt_qp_35 | 0.397 | 34.958 | 45.625 | 45.816 | 0.36 | 34.099 | 45.153 | 45.294 |
| soldier_vox10_0690 | refined_opt_qp_40 | 0.202 | 32.766 | 44.517 | 44.569 | 0.181 | 32.004 | 44.131 | 44.402 |
| soldier_vox10_0690 | refined_opt_qp_45 | 0.103 | 30.618 | 43.281 | 43.264 | 0.091 | 30 | 42.82 | 43.274 |
| soldier_vox10_0690 | refined_opt_qp_50 | 0.051 | 28.468 | 41.27 | 40.498 | 0.045 | 27.976 | 41.41 | 40.823 |
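
Because TL and TF land on different bpp values at the same QP, a same-row comparison mixes rate and distortion. One hedged way to separate them is to interpolate one curve at the other's rate points. The sketch below does this for the loot_vox10_1200 Y-PSNR values from the table above, assuming piecewise-linear interpolation in the log-rate domain (a simplification, not a BD-rate computation); `interp_psnr` and `gains_at_matched_rate` are hypothetical helpers.

```python
import math

# (color_bpp, Y-PSNR in dB) for loot_vox10_1200, copied from the table above.
TL = [(1.648, 44.959), (0.845, 42.029), (0.400, 39.169), (0.193, 36.869),
      (0.096, 34.776), (0.047, 32.775), (0.027, 31.151)]
TF = [(1.489, 43.448), (0.769, 41.048), (0.361, 38.448), (0.169, 36.241),
      (0.081, 34.223), (0.041, 32.376), (0.023, 30.851)]


def interp_psnr(curve, bpp):
    """Interpolate PSNR at `bpp`, linear in log(bpp); None outside the curve."""
    pts = sorted(curve)
    for (r0, p0), (r1, p1) in zip(pts, pts[1:]):
        if r0 <= bpp <= r1:
            t = (math.log(bpp) - math.log(r0)) / (math.log(r1) - math.log(r0))
            return p0 + t * (p1 - p0)
    return None


def gains_at_matched_rate(curve_a, curve_b):
    """For each rate point of curve_b, return (bpp, PSNR_a(bpp) - PSNR_b)."""
    gains = []
    for bpp, psnr_b in sorted(curve_b):
        psnr_a = interp_psnr(curve_a, bpp)
        if psnr_a is not None:  # skip rates outside curve_a's range
            gains.append((bpp, psnr_a - psnr_b))
    return gains


for bpp, gain in gains_at_matched_rate(TL, TF):
    print(f"{bpp:.3f} bpp: TL - TF = {gain:+.3f} dB")
```

Rate points of one curve that fall outside the bpp range of the other are skipped instead of extrapolated.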

Citation:

@article{DBLP:journals/corr/abs-2002-04439,

author = {Maurice Quach and

Giuseppe Valenzise and

Fr{\'{e}}d{\'{e}}ric Dufaux},

title = {Folding-based compression of point cloud attributes},

journal = {CoRR},

volume = {abs/2002.04439},

year = {2020},

url = {https://arxiv.org/abs/2002.04439},

archivePrefix = {arXiv},

eprint = {2002.04439},

timestamp = {Wed, 12 Feb 2020 16:38:55 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-2002-04439.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

contributors

name: Ye Hua

email: yeh@pcl.ac.cn