Title: A Neural Transition-based Model for Argumentation Mining

Introduction

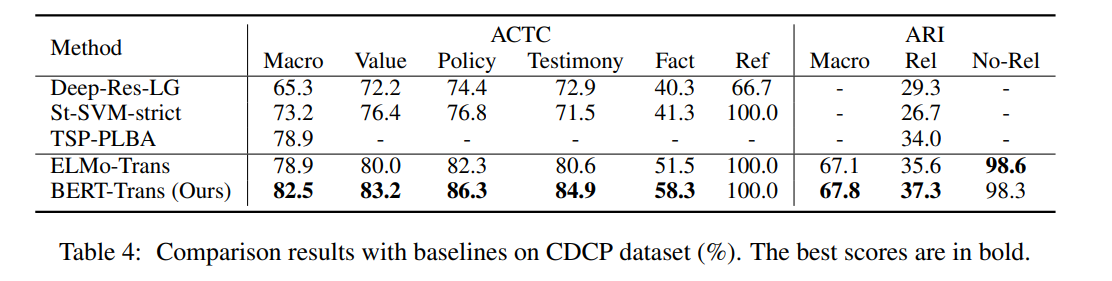

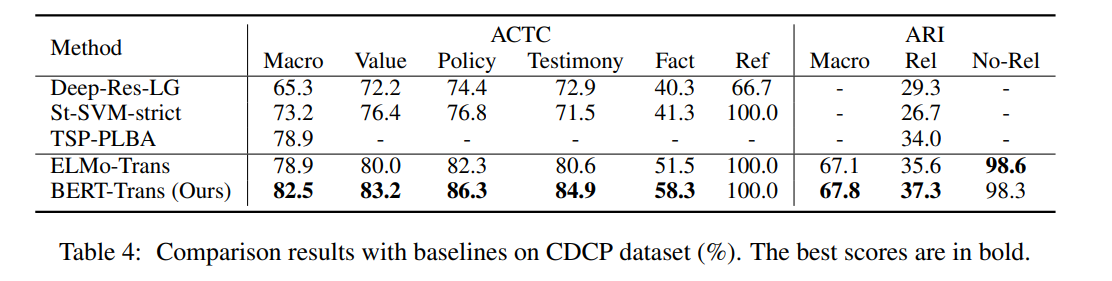

The goal of argumentation mining is to automatically extract argumentation structures from argumentative texts. Most existing methods determine argumentative relations by exhaustively enumerating all possible pairs of argument components, an approach that suffers from low efficiency and class imbalance. Moreover, due to the complex nature of argumentation, there is so far no universal method that can address both tree and non-tree structured argumentation. To address these issues, we propose a neural transition-based model for argumentation mining, which incrementally builds an argumentation graph by generating a sequence of actions, avoiding inefficient enumeration operations. Furthermore, our model can handle both tree and non-tree structured argumentation without introducing any structural constraints. Experimental results show that our model achieves the best performance on two public datasets of different structures.

Model

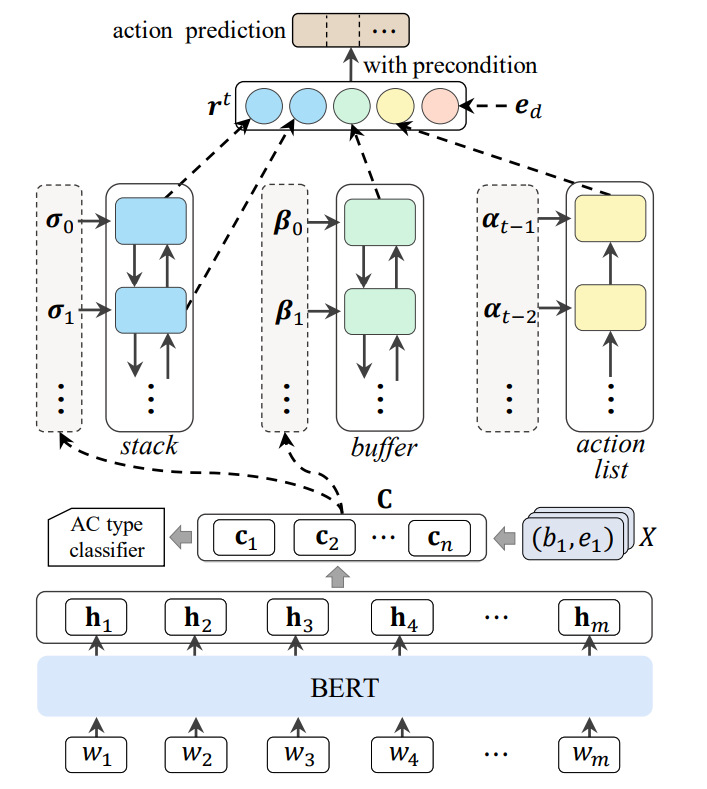

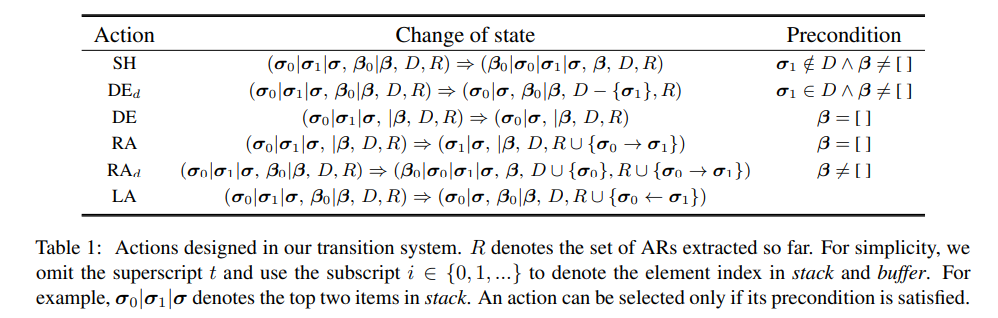

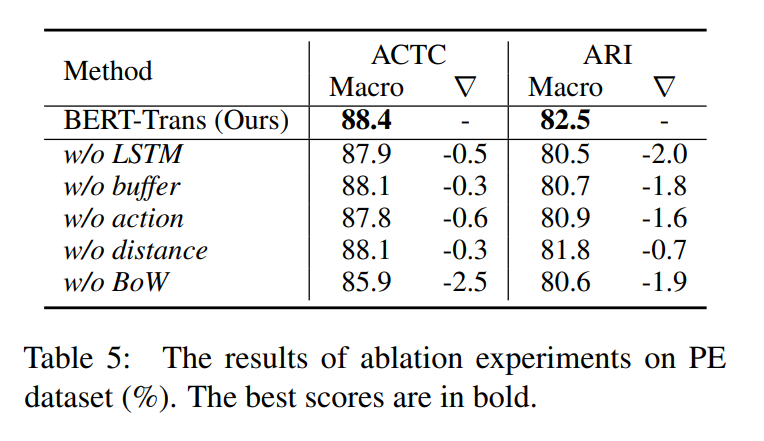

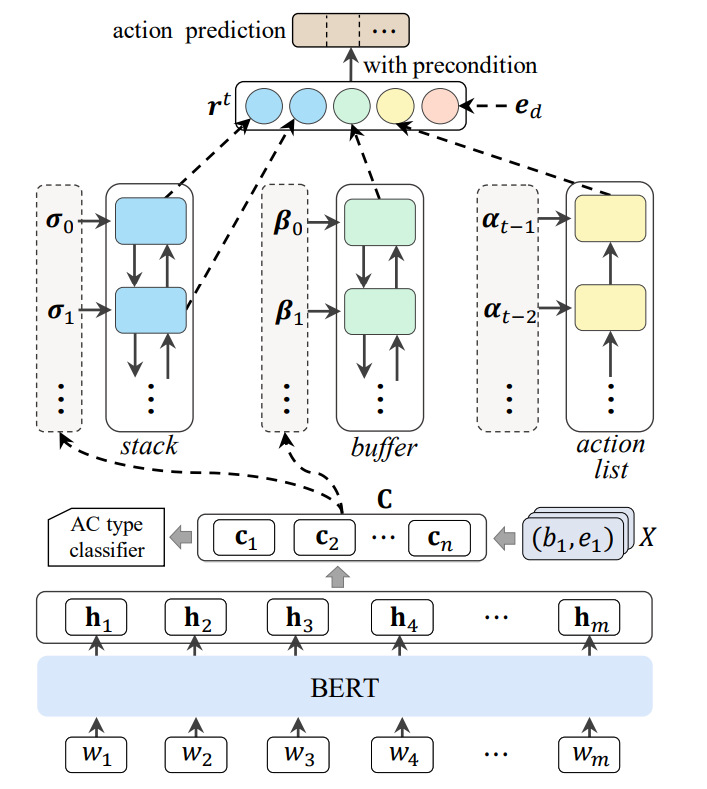

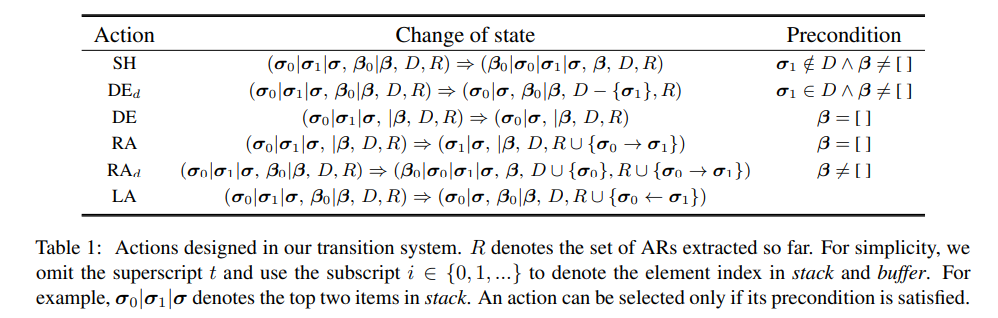

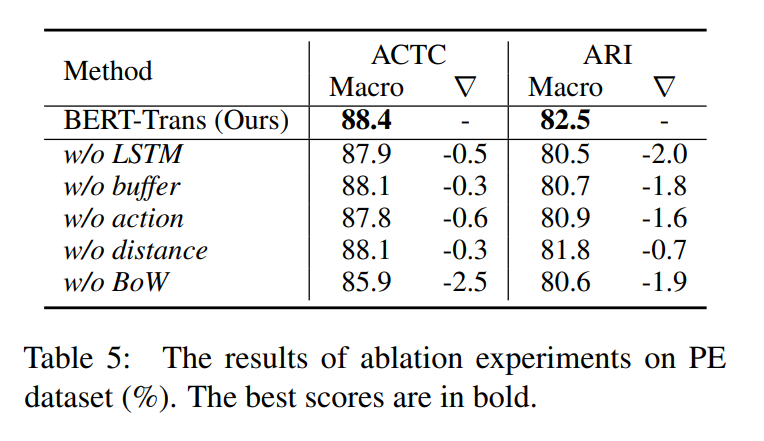

We present a neural transition-based model for argumentation mining (AM), which jointly learns argument component type classification (ACTC) and argumentative relation identification (ARI). Based on the current parser state, our model generates a sequence of actions that incrementally builds an argumentation graph.

- We utilize BERT and LSTM to represent our parser state, which contains a stack σ to store processed argument components (ACs), a buffer β to store unprocessed ACs, a delay set D to record ACs that need to be removed subsequently, and an action list α to record historical actions.

- Then, the learning problem is framed as: given the parser state at the current step $t$, $(σ^{t}, β^{t}, D^{t}, α^{t})$, predict an action that determines the parser state of the next step, and simultaneously identify argumentative relations (ARs) according to the predicted action.

The figure above shows the architecture of our model.
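To make the parser state concrete, here is a minimal sketch of the four components (stack, buffer, delay set, action list) and of how an action sequence can incrementally build a set of relations. It is an illustration under stated assumptions, not the code in this repository: the class `ParserState`, the functions `apply_action` and `run`, and the four actions `SHIFT`, `ARC`, `DELAY` and `DROP` are hypothetical names chosen for this example and are not the action set defined in the paper. In the actual model the next action is predicted from the BERT/LSTM representation of the state; here the sequence is simply given.

```python
from typing import Iterable, List, Set, Tuple


class ParserState:
    """Parser state (sigma, beta, D, alpha); ACs are referred to by index."""

    def __init__(self, num_acs: int) -> None:
        self.stack: List[int] = []                   # sigma: processed ACs
        self.buffer: List[int] = list(range(num_acs))  # beta: unprocessed ACs
        self.delay: Set[int] = set()                 # D: ACs to remove later
        self.actions: List[str] = []                 # alpha: action history


def apply_action(state: ParserState, action: str,
                 relations: Set[Tuple[int, int]]) -> None:
    """Apply one HYPOTHETICAL action in place (not the paper's action set)."""
    if action == "SHIFT":
        # Move the front of the buffer onto the stack.
        state.stack.append(state.buffer.pop(0))
    elif action == "ARC":
        # Record a relation from the stack top to the buffer front.
        relations.add((state.stack[-1], state.buffer[0]))
    elif action == "DELAY":
        # Mark the stack top as "to be removed later".
        state.delay.add(state.stack[-1])
    elif action == "DROP":
        # Remove the stack top now and clear any pending delay mark.
        state.delay.discard(state.stack.pop())
    else:
        raise ValueError("unknown action: {}".format(action))
    state.actions.append(action)


def run(num_acs: int, action_sequence: Iterable[str]):
    """Replay a given action sequence and return the final state and relations."""
    state = ParserState(num_acs)
    relations: Set[Tuple[int, int]] = set()
    for action in action_sequence:
        apply_action(state, action, relations)
    return state, relations
```

For example, `run(3, ["SHIFT", "ARC", "SHIFT", "ARC", "DROP", "SHIFT"])` links AC 0 to AC 1 and AC 1 to AC 2 without scoring every pair of components, which is the point of the transition-based formulation.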

Experimental Results

Installation and Environment Configuration

- python 3.6
- pytorch 1.7.1
- cuda 10.2
- transformers 4.2.1
- json 2.0.9
- numpy 1.19.4
- pandas 1.1.5
- scikit-learn 0.24.0
- tqdm 4.54.1
- argparse 1.1
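A quick way to compare an existing environment against the versions listed above is to import each module and read its `__version__` attribute. The helper below is a small sketch, not part of this repository; `installed_versions` and `IMPORT_NAMES` are names made up for this example. Note that some import names differ from the package names (`torch` for pytorch, `sklearn` for scikit-learn), and that `json` and `argparse` ship with the Python standard library, so they need no separate installation.

```python
import importlib

# Import names for the packages listed above (assumed mapping for this sketch).
IMPORT_NAMES = ["torch", "transformers", "json", "numpy", "pandas",
                "sklearn", "tqdm", "argparse"]


def installed_versions(import_names):
    """Return {import_name: version string, or None if the import fails}."""
    versions = {}
    for name in import_names:
        try:
            module = importlib.import_module(name)
        except ImportError:
            versions[name] = None  # package is not installed
            continue
        # Fall back to "unknown" if the module exposes no __version__.
        versions[name] = getattr(module, "__version__", "unknown")
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(IMPORT_NAMES).items():
        print("{:<14}{}".format(name, version or "NOT INSTALLED"))
```

The CUDA version is not covered by this check, since it depends on the local driver and on how PyTorch was built.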

Instructions for Use

- data - contains the datasets.
  - bert-base-uncased - put the downloaded PyTorch BERT model here (config.json, pytorch_model.bin, vocab.txt).
- saved_models - contains saved models, training logs and results.
- utils - utility code.
  - config.py - parameter settings.
  - evaluation.py - evaluation procedure.
  - dataloader.py - load train, dev and test data.
  - metrics.py - evaluation metrics.
  - trans_module.py - the proposed transition-based model.
  - transitions.py - transform text into transitions.
- load_text_essays.py - load the PE dataset.
- prepare_data.py - preprocess the input data.
- run.py - train and evaluate the proposed transition-based model.
- preprocess.sh - prepare data and models.

bash preprocess.sh

python run.py
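Because the BERT directory must contain the three files named above, a short check before training can catch an incomplete download early. This is a sketch only: the helper `missing_bert_files` is hypothetical, and the path `data/bert-base-uncased` is an assumption about where the directory sits, so adjust it to your checkout.

```python
from pathlib import Path

# Files the instructions above say belong in the BERT directory.
EXPECTED_FILES = ("config.json", "pytorch_model.bin", "vocab.txt")


def missing_bert_files(model_dir):
    """Return the expected file names that are absent from model_dir."""
    model_dir = Path(model_dir)
    return [name for name in EXPECTED_FILES
            if not (model_dir / name).is_file()]


if __name__ == "__main__":
    # Assumed location; not confirmed by the repository layout.
    missing = missing_bert_files("data/bert-base-uncased")
    if missing:
        print("Missing BERT files:", ", ".join(missing))
    else:
        print("All expected BERT files are present.")
```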

Reference

@inproceedings{

title = "A Neural Transition-based Model for Argumentation Mining",

author = "Bao, Jianzhu and Fan, Chuang and Wu, Jipeng and Dang, Yixue and Du, Jiachen and Xu, Ruifeng",

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers)",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.acl-long.497",

doi = "10.18653/v1/2021.acl-long.497",

pages = "6354--6364",

}