
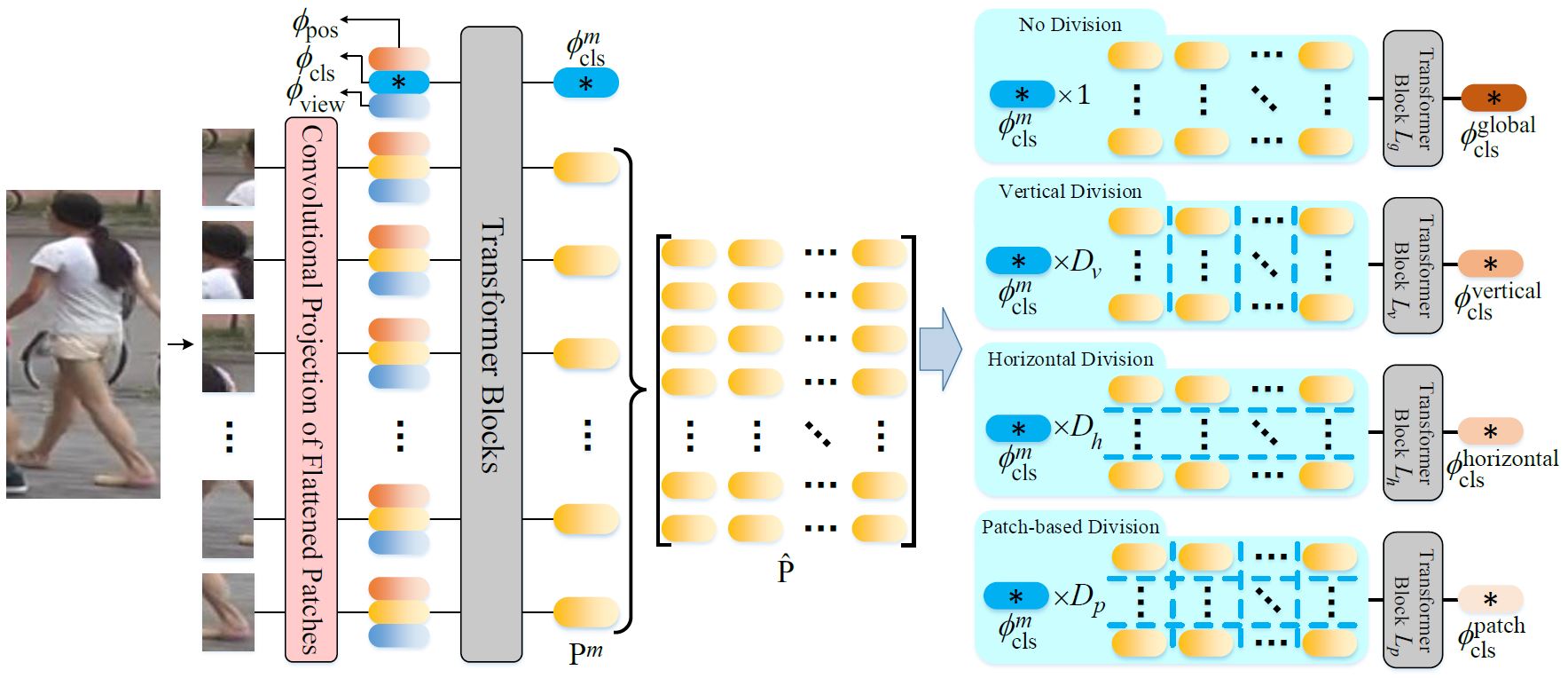

Multi-direction and Multi-scale Pyramid in Transformer for Video-based Pedestrian Retrieval

This repository contains the implementation of the proposed PiT. For the preprint version, please refer to [arXiv].

Getting Started

Requirements

Brief instructions for setting up the experimental environment are given below.

```shell
# create and activate a virtual environment
$ conda create -n PiT python=3.6 -y
$ conda activate PiT
# install PyTorch 1.8.1 or 1.6.0 (other versions may also work)
# install the remaining dependencies
$ pip install timm scipy einops yacs opencv-python tensorboard pandas
```
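After installation, a quick way to confirm the environment is to check that each dependency can be found. The sketch below is not part of the repository; it assumes the usual import names, in particular that `opencv-python` is imported as `cv2` and that PyTorch is imported as `torch`.

```python
import importlib.util
import sys

# Import names for the packages installed above (opencv-python -> cv2),
# plus torch, which is installed separately.
REQUIRED_MODULES = ["torch", "timm", "scipy", "einops", "yacs",
                    "cv2", "tensorboard", "pandas"]


def missing_modules(names):
    """Return the subset of module names that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    print("Python", sys.version.split()[0])
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules were found.")
```

`find_spec` only locates a module without importing it, so the check is fast, but a module that is found can still fail at import time (for example, because of a CUDA mismatch).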

Download pre-trained model

The pre-trained ViT model can be downloaded from this link and should be placed in the `/home/[USER]/.cache/torch/checkpoints/` directory.
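The expected location can be checked with a few lines of Python. This is a hypothetical helper, not repository code: it assumes `~` expands to `/home/[USER]` and that the checkpoint is a `.pth` file. The exact file name the code expects is not stated here, so the helper only lists what is present.

```python
import os


def checkpoint_dir(home=None):
    """Return the directory where the pre-trained ViT weights should go.

    Mirrors /home/[USER]/.cache/torch/checkpoints/ from the instructions;
    `home` defaults to the current user's home directory.
    """
    home = home or os.path.expanduser("~")
    return os.path.join(home, ".cache", "torch", "checkpoints")


def list_checkpoints(home=None):
    """List the .pth files in the checkpoint directory ([] if it does not exist)."""
    path = checkpoint_dir(home)
    if not os.path.isdir(path):
        return []
    return sorted(f for f in os.listdir(path) if f.endswith(".pth"))


if __name__ == "__main__":
    print(checkpoint_dir())
    print(list_checkpoints() or "No .pth files found yet.")
```

If the directory does not exist yet, `mkdir -p ~/.cache/torch/checkpoints` creates it before the downloaded file is copied in.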

Dataset Preparation

For iLIDS-VID, please refer to this issue.

Training and Testing

```shell
# The command below runs both training and testing.
$ python train.py --config_file configs/MARS/pit.yml MODEL.DEVICE_ID "('0')"
# For testing only, set TEST.WEIGHT in the yml file to the path of the model weights; otherwise, leave it as None.
```
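The trailing `MODEL.DEVICE_ID "('0')"` is a key/value override applied on top of the yml file. Assuming the repository follows the TransReID convention of passing these pairs to yacs' `CfgNode.merge_from_list`, each value string is decoded with `ast.literal_eval`, so `"('0')"` becomes the plain string `'0'` (parentheses without a comma do not make a tuple), and values that are not Python literals, such as file paths, are kept as strings. The standard-library sketch below imitates that decoding on a nested dict; `apply_overrides` and `decode_value` are hypothetical names, not functions in this repository or in yacs, and the default values are placeholders.

```python
import ast


def decode_value(raw):
    """Decode a command-line string the way yacs does: try a Python literal,
    otherwise keep the raw string."""
    try:
        return ast.literal_eval(raw)
    except (ValueError, SyntaxError):
        return raw


def apply_overrides(cfg, opts):
    """Apply KEY VALUE pairs (e.g. from sys.argv) to a nested dict in place.

    Hypothetical stand-in for yacs' CfgNode.merge_from_list; unlike yacs it
    does not check that the new value has the same type as the old one.
    """
    if len(opts) % 2 != 0:
        raise ValueError("Overrides must come in KEY VALUE pairs")
    for key, raw in zip(opts[0::2], opts[1::2]):
        *parents, leaf = key.split(".")
        node = cfg
        for name in parents:
            node = node[name]
        if leaf not in node:
            raise KeyError("Non-existent config key: " + key)
        node[leaf] = decode_value(raw)
    return cfg


# Placeholder defaults; the real values come from configs/MARS/pit.yml.
cfg = {"MODEL": {"DEVICE_ID": "0"}, "TEST": {"WEIGHT": ""}}
apply_overrides(cfg, ["MODEL.DEVICE_ID", "('0')"])
print(repr(cfg["MODEL"]["DEVICE_ID"]))  # prints '0'
```

If train.py and test.py forward extra arguments this way, TEST.WEIGHT can likely be set on the command line in the same manner instead of in the yml file.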

Results in the Paper

The MARS and iLIDS-VID results below were obtained by training on a single NVIDIA GPU with 24 GB of memory. To reduce GPU memory usage, adjust the DATALOADER.P parameter in the yml file.

| Model | Rank-1 (%) @ MARS | Rank-1 (%) @ iLIDS-VID |
|---|---|---|
| PiT | 90.22 (code: wqxv) | 92.07 (code: quci) |
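Rank-1 is the fraction of query tracklets whose most similar gallery tracklet has the same identity. The sketch below computes it from a query-by-gallery distance matrix in plain Python. It is a simplified illustration, not the repository's evaluation code: it omits the camera-based filtering of gallery entries that standard re-identification evaluation applies, and `rank1_accuracy` is a hypothetical name.

```python
def rank1_accuracy(dist, query_ids, gallery_ids):
    """Fraction of queries whose nearest gallery item has the same identity.

    dist[i][j] is the distance between query i and gallery item j
    (smaller means more similar).
    """
    if not query_ids or len(dist) != len(query_ids):
        raise ValueError("need one distance row per query and at least one query")
    hits = 0
    for row, qid in zip(dist, query_ids):
        # Index of the closest gallery tracklet for this query.
        best = min(range(len(row)), key=row.__getitem__)
        hits += int(gallery_ids[best] == qid)
    return hits / len(query_ids)


# Toy example: 3 queries, 4 gallery tracklets.
dist = [
    [0.1, 0.9, 0.8, 0.7],  # nearest is gallery 0 (id 1): hit
    [0.6, 0.2, 0.9, 0.5],  # nearest is gallery 1 (id 2): hit
    [0.3, 0.8, 0.9, 0.4],  # nearest is gallery 0 (id 1), query id 3: miss
]
print(rank1_accuracy(dist, query_ids=[1, 2, 3], gallery_ids=[1, 2, 3, 3]))
# 2 of 3 queries hit, so this prints 0.6666666666666666
```

The values in the table correspond to this fraction multiplied by 100.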

Download these models, place them in the `../logs/[DATASET]_PiT_1x210_3x70_105x2_6p` directory, and evaluate them with the command below.

```shell
$ python test.py --config_file configs/MARS/pit.yml MODEL.DEVICE_ID "('0')"
```

Acknowledgement

This repository is built upon TransReID.

Citation

If you find this project useful in your research, please cite:

```bibtex
@ARTICLE{9714137,
  author={Zang, Xianghao and Li, Ge and Gao, Wei},
  journal={IEEE Transactions on Industrial Informatics},
  title={Multi-direction and Multi-scale Pyramid in Transformer for Video-based Pedestrian Retrieval},
  year={2022},
  volume={},
  number={},
  pages={1-1},
  doi={10.1109/TII.2022.3151766}
}
```

License

This repository is released under the GPL-2.0 License as found in the LICENSE file.
