
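I. Shandong Land Group Cup: Remote Sensing Image Semantic Segmentation

Competition page: https://www.heywhale.com/home/competition/61c95b5dc4437e0017d5feea/content/0?from=notice

1. Competition background

Following central and provincial requirements, Binzhou has made smart-city construction its guiding framework, pushed forward the integration of data resources, and developed a range of new data applications. The city built the Binzhou public data open website, through which 54 departments and 7 counties (cities, districts) publish 11,592 catalogs, more than 400 million records in total, to the public in accordance with laws and regulations. The data covers more than 20 themes, including the economy, science and technology, education, health, culture, and sports.

The Binzhou "Shandong Land Group Cup" Data Application Innovation and Entrepreneurship Competition aims to broaden the scope and impact of big-data applications in Binzhou, improve the understanding of big data among government departments and the public, discover outstanding big-data cases and talent, accelerate the integration of data resources with industrial development, turn competition results into practice, and cultivate the data industry. The goal is to provide data support for economic and social development, so that data is drawn from the people and benefits the people, and to bring together data openness, data application, and industrial development.

Land-feature classification is one of the key methods for observing and mapping land-surface elements. At present, however, land features are extracted mainly by hand, which is slow and expensive, so algorithms that raise accuracy and automate the work are urgently needed. Using intelligent algorithms and big-data technology to break through the bottleneck in extracting and analyzing information from remote sensing imagery is both a pressing business need and an important benchmark for a company building digital operations.

Using the data and the modeling platform provided by the organizers, participants analyze the spectral and spatial information in optical remote sensing images and perform land-type semantic segmentation, assigning a semantic class label to every pixel that carries semantic information.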

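2. Data description

The competition uses 15,000+ remote sensing semantic segmentation samples. The imagery was captured by GF1-WFV over the area around Binzhou, Shandong, and preprocessed with orthorectification, registration, and cropping. The classification target is the Shandong Province land-use type, merged into six classes: cropland; forest; grassland; water; urban/rural, industrial/mining, and residential land; and unused land.

Raw images are in tif format (e.g. 000001_GF.tif) with three bands, red, green, and blue (RGB), and a size of 256*256 pixels.

Label files are in tif format (e.g. 000001_LT.tif); each pixel's label is a value from 1 to 6: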

| Class | Label |
|---|---|
| Cropland | 1 |
| Forest | 2 |
| Grassland | 3 |
| Water | 4 |
| Urban/rural, industrial/mining, and residential land | 5 |
| Unused land | 6 |
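The label table can be kept next to the code as a small lookup. The sketch below is illustrative only: `CLASS_NAMES`, `to_zero_based`, and `to_competition_label` are hypothetical names, and the English class names are translations of the table above.

```python
# Hypothetical lookup built from the label table (competition labels 1..6).
CLASS_NAMES = {
    1: "cropland",
    2: "forest",
    3: "grassland",
    4: "water",
    5: "urban/rural, industrial/mining, residential land",
    6: "unused land",
}


def to_zero_based(label: int) -> int:
    """Map a competition label (1..6) to a zero-based training index (0..5)."""
    if label not in CLASS_NAMES:
        raise ValueError(f"unknown competition label: {label}")
    return label - 1


def to_competition_label(index: int) -> int:
    """Map a zero-based training index (0..5) back to a competition label (1..6)."""
    label = index + 1
    if label not in CLASS_NAMES:
        raise ValueError(f"unknown class index: {index}")
    return label


print(to_zero_based(4))          # 3
print(to_competition_label(3))   # 4
```

Keeping both directions in one place makes it harder to forget the `+1` when predictions are written back for submission.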

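3. Data download

| Data | Description | Download link (available after registration) | Release time |
|---|---|---|---|
| Submission sample | "results.zip": sample submission file with random output values, for participants' reference | Click to download | 2021-12-31 12:00 noon |
| Preliminary training set | 5,000 raw images with label files | Click to download | 2021-12-31 12:00 noon |
| Preliminary leaderboard A test set | 2,000 raw images | Click to download | 2021-12-31 12:00 noon |
| Preliminary leaderboard B test set | 1,000 raw images | Click to download | 2022-1-27 12:00 noon |
| Semifinal data | Training set, leaderboard A test set, and leaderboard B test set | Competition page ↗: go to Organization > Workbench > View data sources, then create a project and mount the data through the "Add data source" option | 2022-2-15 12:00 noon |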

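II. Environment setup

This project uses the PaddleSeg toolkit, release v2.3, to segment land parcels in remote sensing imagery.

1. Installing gdal

After repeated attempts, conda was the only way I could get this library installed. Note that the cell output recorded below ends in a conda-internal `TypeError` (`raise_on_status`), so this particular run did not complete.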

# !mkdir /home/aistudio/external-libraries

!conda install gdal --prefix=/home/aistudio/external-libraries -y

Collecting package metadata (current_repodata.json): failed

# >>>>>>>>>>>>>>>>>>>>>> ERROR REPORT <<<<<<<<<<<<<<<<<<<<<<

Traceback (most recent call last):

File "/opt/conda/lib/python3.6/site-packages/conda/gateways/connection/session.py", line 60, in __call__

return cls._thread_local.session

AttributeError: '_thread._local' object has no attribute 'session'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/opt/conda/lib/python3.6/site-packages/conda/exceptions.py", line 1079, in __call__

return func(*args, **kwargs)

File "/opt/conda/lib/python3.6/site-packages/conda/cli/main.py", line 84, in _main

exit_code = do_call(args, p)

File "/opt/conda/lib/python3.6/site-packages/conda/cli/conda_argparse.py", line 83, in do_call

return getattr(module, func_name)(args, parser)

File "/opt/conda/lib/python3.6/site-packages/conda/cli/main_install.py", line 20, in execute

install(args, parser, 'install')

File "/opt/conda/lib/python3.6/site-packages/conda/cli/install.py", line 265, in install

should_retry_solve=(_should_retry_unfrozen or repodata_fn != repodata_fns[-1]),

File "/opt/conda/lib/python3.6/site-packages/conda/core/solve.py", line 117, in solve_for_transaction

should_retry_solve)

File "/opt/conda/lib/python3.6/site-packages/conda/core/solve.py", line 158, in solve_for_diff

force_remove, should_retry_solve)

File "/opt/conda/lib/python3.6/site-packages/conda/core/solve.py", line 262, in solve_final_state

ssc = self._collect_all_metadata(ssc)

File "/opt/conda/lib/python3.6/site-packages/conda/common/io.py", line 88, in decorated

return f(*args, **kwds)

File "/opt/conda/lib/python3.6/site-packages/conda/core/solve.py", line 425, in _collect_all_metadata

index, r = self._prepare(prepared_specs)

File "/opt/conda/lib/python3.6/site-packages/conda/core/solve.py", line 1021, in _prepare

self.subdirs, prepared_specs, self._repodata_fn)

File "/opt/conda/lib/python3.6/site-packages/conda/core/index.py", line 289, in get_reduced_index

repodata_fn=repodata_fn)

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 140, in query_all

result = tuple(concat(executor.map(subdir_query, channel_urls)))

File "/opt/conda/lib/python3.6/concurrent/futures/_base.py", line 586, in result_iterator

yield fs.pop().result()

File "/opt/conda/lib/python3.6/concurrent/futures/_base.py", line 432, in result

return self.__get_result()

File "/opt/conda/lib/python3.6/concurrent/futures/_base.py", line 384, in __get_result

raise self._exception

File "/opt/conda/lib/python3.6/concurrent/futures/thread.py", line 56, in run

result = self.fn(*self.args, **self.kwargs)

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 133, in <lambda>

package_ref_or_match_spec))

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 145, in query

self.load()

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 209, in load

_internal_state = self._load()

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 375, in _load

repodata_fn=self.repodata_fn)

File "/opt/conda/lib/python3.6/site-packages/conda/core/subdir_data.py", line 680, in fetch_repodata_remote_request

session = CondaSession()

File "/opt/conda/lib/python3.6/site-packages/conda/gateways/connection/session.py", line 62, in __call__

session = cls._thread_local.session = super(CondaSessionType, cls).__call__()

File "/opt/conda/lib/python3.6/site-packages/conda/gateways/connection/session.py", line 88, in __init__

raise_on_status=False)

TypeError: __init__() got an unexpected keyword argument 'raise_on_status'

`$ /opt/conda/bin/conda install gdal --prefix=/home/aistudio/external-libraries -y`

environment variables:

CIO_TEST=<not set>

CONDA_ROOT=/opt/conda

CURL_CA_BUNDLE=<not set>

IDE_HUB_PROXY_1_SERVICE_PORT_PROXY=<set>

LD_LIBRARY_PATH=/usr/lib/x86_64-linux-gnu:/usr/local/cuda/lib64

LIBRARY_PATH=/usr/local/cuda/lib64/stubs

PATH=/home/aistudio/.data/webide/pip/bin:/opt/conda/envs/python35-paddle120

-env/bin:/opt/conda/bin:/usr/local/nvidia/bin:/usr/local/cuda/bin:/usr

/local/sbin:/usr/sbin:/sbin:/home/work/bin:/home/work/.local/bin:/usr/

local/bin:/usr/bin:/bin

PYTHONUSERBASE=/home/aistudio/.data/webide/pip

REQUESTS_CA_BUNDLE=<not set>

SSL_CERT_FILE=<not set>

WEBIDE_USER_PYTHON_PATH=/opt/conda/envs/python35-paddle120-env/bin

active environment : None

user config file : /home/aistudio/.condarc

populated config files : /home/aistudio/.condarc

conda version : 4.10.1

conda-build version : not installed

python version : 3.6.8.final.0

virtual packages : __linux=4.13.0=0

__glibc=2.23=0

__unix=0=0

__archspec=1=x86_64

base environment : /opt/conda (writable)

conda av data dir : /opt/conda/etc/conda

conda av metadata url : https://repo.anaconda.com/pkgs/main

channel URLs : https://conda.anaconda.org/conda-forge/linux-64

https://conda.anaconda.org/conda-forge/noarch

https://repo.anaconda.com/pkgs/main/linux-64

https://repo.anaconda.com/pkgs/main/noarch

https://repo.anaconda.com/pkgs/r/linux-64

https://repo.anaconda.com/pkgs/r/noarch

package cache : /opt/conda/pkgs

/home/aistudio/.conda/pkgs

envs directories : /opt/conda/envs

/home/aistudio/.conda/envs

platform : linux-64

user-agent : conda/4.10.1 requests/2.22.0 CPython/3.6.8 Linux/4.13.0-36-generic ubuntu/16.04.6 glibc/2.23

UID:GID : 1000:1000

netrc file : None

offline mode : False

An unexpected error has occurred. Conda has prepared the above report.

Upload successful.

import sys

import cv2

import glob

import os

import numpy as np

from osgeo import gdal

2. Downloading PaddleSeg

# Download PaddleSeg
%cd ~
!git clone -b release/2.3 https://gitee.com/paddlepaddle/PaddleSeg.git --depth=1

3. Installing PaddleSeg

%cd ~/PaddleSeg/

!pip install -U pip

!pip install -r requirements.txt

!pip install -e .

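III. Data preparation

1. Extracting the data

%cd ~
# Extract the training set to /home/aistudio/train_dataset
!unzip -oaq -d /home/aistudio/train_dataset data/data125219/初赛训练集.zip
# Extract the leaderboard A test set to /home/aistudio/test_dataset
!unzip -oaq -d /home/aistudio/test_dataset data/data125219/初赛A榜_GF.zip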

/home/aistudio

2. Splitting into training and validation file lists

# Generate the file lists
%cd ~
import os
import numpy as np

DATA_ROOT_DIR = '/home/aistudio/train_dataset/'

def make_list():
    # Image IDs are the file names without the "_GF.tif" ending.
    # The suffix is sliced off instead of using str.strip('_GF'),
    # which strips a set of characters rather than a literal suffix.
    img_list = [img[:-len('_GF.tif')]
                for img in os.listdir(os.path.join(DATA_ROOT_DIR, '初赛训练_GF'))
                if img.endswith('_GF.tif')]
    data_path_list = []
    for image_id in img_list:
        image_path = os.path.join(DATA_ROOT_DIR, '初赛训练_GF', f"{image_id}_GF.tif")
        label_path = os.path.join(DATA_ROOT_DIR, '初赛训练_LT', f"{image_id}_LT.tif")
        data_path_list.append((image_path, label_path))

    # Fixed seed so that the shuffle is repeatable
    np.random.seed(5)
    np.random.shuffle(data_path_list)

    # 80% training, 20% validation
    total_len = len(data_path_list)
    train_data_len = int(total_len * 0.8)
    train_data = data_path_list[0 : train_data_len]
    val_data = data_path_list[train_data_len : ]

    # One "image_path label_path" pair per line
    with open(os.path.join(DATA_ROOT_DIR, 'train_list.txt'), "w") as f:
        for image, label in train_data:
            f.write(f"{image} {label}\n")

    with open(os.path.join(DATA_ROOT_DIR, 'val_list.txt'), "w") as f:
        for image, label in val_data:
            f.write(f"{image} {label}\n")

if __name__ == '__main__':
    make_list()

/home/aistudio
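The split logic can be checked without touching the real dataset. The following is a minimal sketch using only the standard library; `split_pairs` is a hypothetical helper, and because it uses `random.Random` rather than `np.random`, it will not reproduce the exact ordering of the notebook's file lists.

```python
import random


def split_pairs(image_ids, train_ratio=0.8, seed=5):
    """Pair image and label file names, shuffle them reproducibly, and split."""
    # Sorting first makes the result independent of directory listing order.
    pairs = [(f"{i}_GF.tif", f"{i}_LT.tif") for i in sorted(image_ids)]
    random.Random(seed).shuffle(pairs)
    cut = int(len(pairs) * train_ratio)
    return pairs[:cut], pairs[cut:]


# Ten made-up IDs in the same zero-padded style as the dataset.
ids = [f"{n:06d}" for n in range(1, 11)]
train, val = split_pairs(ids)
print(len(train), len(val))  # 8 2
```

Sorting before shuffling is a small design choice: directory listing order is not guaranteed, so a fixed seed alone does not pin down the split.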

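3. Adjusting the labels

The original label images are encoded starting from 1, which does not meet PaddleSeg's requirement that class indices start from 0, so the labels are shifted down by one.

# Shift the labels so that they start from 0
import numpy as np
from PIL import Image

def convert_img_lab(img_path):
    img = Image.open(img_path).convert("L")
    img = np.array(img)
    # Vectorized subtraction. I first wrote the nested loop below out of habit;
    # thanks to 嘟嘟 for the tip.
    # for i in range(256):
    #     for j in range(256):
    #         img[i][j] = img[i][j] - 1
    img = img - 1
    img = Image.fromarray(img.astype('uint8'))
    # The label file is overwritten in place, so run this only once per file.
    img.save(img_path, quality=95)

# Apply the shift to every label file
%cd ~
for file in os.listdir('/home/aistudio/train_dataset/初赛训练_LT/'):
    convert_img_lab(os.path.join('/home/aistudio/train_dataset/初赛训练_LT/', file))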
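One detail of the subtraction is worth spelling out: the label array is 8-bit unsigned, so a pixel whose value is 0 wraps around to 255 rather than becoming -1. The sketch below imitates that arithmetic in plain Python on a made-up 2x3 label patch; the helper names are hypothetical. As far as I know, 255 is also the default `ignore_index` in PaddleSeg's `Dataset`, but treat that as an assumption to verify.

```python
def shift_down_uint8(patch):
    """Subtract 1 from every label with 8-bit wraparound (0 becomes 255)."""
    return [[(v - 1) % 256 for v in row] for row in patch]


def shift_up_uint8(patch):
    """Add 1 to every label with 8-bit wraparound (255 becomes 0)."""
    return [[(v + 1) % 256 for v in row] for row in patch]


# Made-up patch: labels 1..6, plus one unlabeled pixel with value 0.
patch = [[1, 2, 6], [0, 3, 5]]
shifted = shift_down_uint8(patch)
print(shifted)                  # [[0, 1, 5], [255, 2, 4]]
print(shift_up_uint8(shifted))  # [[1, 2, 6], [0, 3, 5]]
```

Since the conversion overwrites the label files, applying it a second time would move class 1 (already 0) to 255 as well, which is why it should run exactly once.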

%cd ~

!head -n 10 train_dataset/train_list.txt

/home/aistudio

/home/aistudio/train_dataset/初赛训练_GF/003938_GF.tif /home/aistudio/train_dataset/初赛训练_LT/003938_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/009635_GF.tif /home/aistudio/train_dataset/初赛训练_LT/009635_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/002443_GF.tif /home/aistudio/train_dataset/初赛训练_LT/002443_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/010992_GF.tif /home/aistudio/train_dataset/初赛训练_LT/010992_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/004967_GF.tif /home/aistudio/train_dataset/初赛训练_LT/004967_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/011362_GF.tif /home/aistudio/train_dataset/初赛训练_LT/011362_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/001391_GF.tif /home/aistudio/train_dataset/初赛训练_LT/001391_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/003704_GF.tif /home/aistudio/train_dataset/初赛训练_LT/003704_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/015393_GF.tif /home/aistudio/train_dataset/初赛训练_LT/015393_LT.tif

/home/aistudio/train_dataset/初赛训练_GF/004227_GF.tif /home/aistudio/train_dataset/初赛训练_LT/004227_LT.tif

from PIL import Image

img=Image.open('/home/aistudio/train_dataset/初赛训练_GF/003938_GF.tif')

print(img.size)

img

(256, 256)

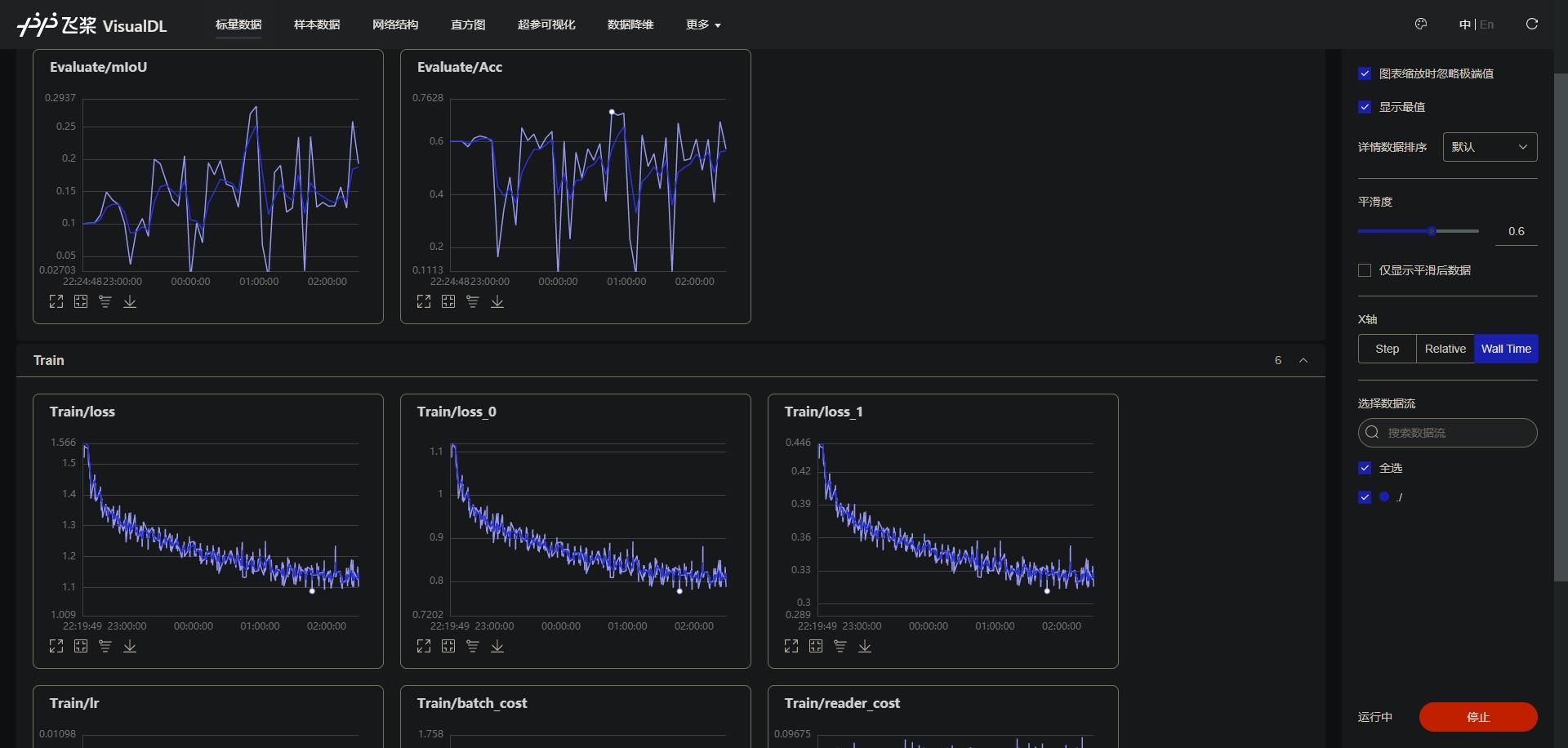

IV. Model training

Analysis of the dataset shows that the pixels of each class cover only a small fraction of the image area.
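A per-class pixel share of this kind can be obtained by counting label values. The sketch below runs on a made-up 4x4 label patch with zero-based labels; it is not the author's analysis code, and `class_pixel_share` is a hypothetical name.

```python
from collections import Counter


def class_pixel_share(label_rows, num_classes=6):
    """Return the fraction of pixels that belong to each class index."""
    counts = Counter(v for row in label_rows for v in row)
    total = sum(counts.values())
    return {c: counts.get(c, 0) / total for c in range(num_classes)}


# Made-up label patch (zero-based class indices).
patch = [
    [0, 0, 0, 0],
    [0, 0, 3, 3],
    [0, 0, 3, 4],
    [0, 0, 0, 0],
]
share = class_pixel_share(patch)
print(share[0], share[3], share[4])  # 0.75 0.1875 0.0625
```

On the real data, the same counting would be accumulated over all label files before dividing.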

The training configuration, remotesensing.yml, is as follows:

batch_size: 60
iters: 150000

train_dataset:
  type: Dataset
  dataset_root: /home/aistudio
  train_path: /home/aistudio/train_dataset/train_list.txt
  num_classes: 6
  transforms:
    - type: ResizeStepScaling
      min_scale_factor: 0.75
      max_scale_factor: 1.5
      scale_step_size: 0.25
    - type: RandomHorizontalFlip
    - type: RandomVerticalFlip
    - type: RandomRotation
      max_rotation: 30
    - type: RandomDistort
      brightness_range: 0.2
      contrast_range: 0.2
      saturation_range: 0.2
    - type: Normalize
    - type: Resize
      target_size: [256, 256]
  mode: train

val_dataset:
  type: Dataset
  dataset_root: /home/aistudio
  val_path: /home/aistudio/train_dataset/val_list.txt
  num_classes: 6
  transforms:
    - type: Resize
      target_size: [256, 256]
    - type: Normalize
  mode: val

optimizer:
  type: sgd
  momentum: 0.9
  weight_decay: 4.0e-5

lr_scheduler:
  type: PolynomialDecay
  learning_rate: 0.01
  end_lr: 0
  power: 0.9

loss:
  types:
    - type: CrossEntropyLoss
  coef: [1, 0.4]

model:
  type: OCRNet
  backbone:
    type: HRNet_W48
    align_corners: False
    pretrained: https://bj.bcebos.com/paddleseg/dygraph/hrnet_w48_ssld.tar.gz
  backbone_indices: [-1]
  pretrained: https://bj.bcebos.com/paddleseg/dygraph/ccf/fcn_hrnetw48_rs_256x256_160k/model.pdparams
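To get a feel for the `lr_scheduler` settings, the schedule can be written out by hand. The sketch below uses the usual non-cyclic polynomial decay formula, lr = (base - end) * (1 - step / total)^power + end, with the values from the configuration. `poly_lr` is a hypothetical helper, not a PaddleSeg function, and it assumes the decay runs over all 150000 iterations.

```python
def poly_lr(step, total_steps=150000, base_lr=0.01, end_lr=0.0, power=0.9):
    """Polynomial learning-rate decay, clamped at total_steps."""
    step = min(step, total_steps)
    return (base_lr - end_lr) * (1 - step / total_steps) ** power + end_lr


# Learning rate at the start, the midpoint, and the end of training.
for step in (0, 75000, 150000):
    print(step, round(poly_lr(step), 6))
```

With `power` just below 1, the curve is close to a straight line from 0.01 down to 0, dropping slightly faster near the end.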

%cd ~/PaddleSeg/
!python train.py \
    --config ../remotesensing.yml \
    --do_eval \
    --use_vdl \
    --save_interval 100 \
    --save_dir output_ocrnet
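The configuration counts training in iterations, while progress is often discussed in epochs. The conversion below is a rough sketch: it assumes the 5,000-image preliminary training set with the 80/20 split used earlier (4,000 training images), a single GPU, and that an incomplete final batch is dropped. If the mounted dataset is larger, the numbers change accordingly. Both function names are hypothetical.

```python
def iters_per_epoch(num_train_images, batch_size, drop_last=True):
    """Number of optimizer steps needed to see the training set once."""
    full, rest = divmod(num_train_images, batch_size)
    return full if (drop_last or rest == 0) else full + 1


def epochs_to_iters(epochs, num_train_images=4000, batch_size=60):
    """Convert a number of epochs into iterations (assumed dataset size)."""
    return epochs * iters_per_epoch(num_train_images, batch_size)


print(iters_per_epoch(4000, 60))             # 66
print(epochs_to_iters(30))                   # 1980
print(150000 // iters_per_epoch(4000, 60))   # 2272
```

Under these assumptions, a run of a few dozen epochs amounts to only a couple of thousand iterations, far fewer than the configured 150000, so the `iters` value acts as an upper bound rather than a target that was reached.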

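V. Model prediction

- The images have a .tif extension, so PaddleSeg/paddleseg/utils/utils.py was modified to accept the .tif format.
- The predictions should be usable directly as the submission, but by default PaddleSeg saves images produced by weighted blending rather than the raw label map, so the PaddleSeg source was modified. The modified file is PaddleSeg/paddleseg/core/predict.py, and the modified code is shown below.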

# PaddleSeg/paddleseg/utils/utils.py
def get_image_list(image_path):
    """Get image list"""
    valid_suffix = [
        '.JPEG', '.jpeg', '.JPG', '.jpg', '.BMP', '.bmp', '.PNG', '.png',
        '.tif'  # added: tif format
    ]
    image_list = []
    image_dir = None
    if os.path.isfile(image_path):
        if os.path.splitext(image_path)[-1] in valid_suffix:
            image_list.append(image_path)
        else:
            # Otherwise treat the file as a list of image paths, one per line
            image_dir = os.path.dirname(image_path)
            with open(image_path, 'r') as f:
                for line in f:
                    line = line.strip()
                    if len(line.split()) > 1:
                        line = line.split()[0]
                    image_list.append(os.path.join(image_dir, line))
    elif os.path.isdir(image_path):
        image_dir = image_path
        for root, dirs, files in os.walk(image_path):
            for f in files:
                if '.ipynb_checkpoints' in root:
                    continue
                if os.path.splitext(f)[-1] in valid_suffix:
                    image_list.append(os.path.join(root, f))
    else:
        raise FileNotFoundError(
            '`--image_path` is not found. it should be an image file or a directory including images'
        )

    if len(image_list) == 0:
        raise RuntimeError('There are not image file in `--image_path`')

    return image_list, image_dir
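The suffix list is matched exactly, so files named `.TIF` or `.tiff` would still be skipped. If that matters for a given dataset, one alternative is a case-insensitive check. The following is a sketch with a hypothetical helper and suffix set, not part of PaddleSeg.

```python
import os

# Hypothetical, lowercase-only suffix set; ".tiff" is an addition for illustration.
VALID_SUFFIXES = {".jpeg", ".jpg", ".bmp", ".png", ".tif", ".tiff"}


def is_supported_image(path):
    """Return True if the file extension is a supported image type, ignoring case."""
    return os.path.splitext(path)[1].lower() in VALID_SUFFIXES


print(is_supported_image("000001_GF.tif"))  # True
print(is_supported_image("000001_GF.TIF"))  # True
print(is_supported_image("notes.txt"))      # False
```

For this competition's data, where every image ends in lowercase `.tif`, the one-entry change shown above is enough.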

# PaddleSeg/paddleseg/core/predict.py
import os
import math

import cv2
import numpy as np
import paddle

from paddleseg import utils
from paddleseg.core import infer
from paddleseg.utils import logger, progbar, visualize

def mkdir(path):
    # Create the parent directory of `path` if it does not exist yet
    sub_dir = os.path.dirname(path)
    if not os.path.exists(sub_dir):
        os.makedirs(sub_dir)

def partition_list(arr, m):
    """split the list 'arr' into m pieces"""
    n = int(math.ceil(len(arr) / float(m)))
    return [arr[i:i + n] for i in range(0, len(arr), n)]

# Modified predict function: saves the raw label maps as *_LT.tif
def predict(model,
            model_path,
            transforms,
            image_list,
            image_dir=None,
            save_dir='output',
            aug_pred=False,
            scales=1.0,
            flip_horizontal=True,
            flip_vertical=False,
            is_slide=False,
            stride=None,
            crop_size=None):
    """
    Predict the images in image_list and save the label maps.

    Args:
        model (nn.Layer): Used to predict for input image.
        model_path (str): The path of pretrained model.
        transforms (transform.Compose): Preprocess for input image.
        image_list (list): A list of image path to be predicted.
        image_dir (str, optional): The root directory of the images predicted. Default: None.
        save_dir (str, optional): The directory to save the predicted label maps. Default: 'output'.
        aug_pred (bool, optional): Whether to use multi-scale and flip augmentation for prediction. Default: False.
        scales (list|float, optional): Scales for augment. It is valid when `aug_pred` is True. Default: 1.0.
        flip_horizontal (bool, optional): Whether to use flip horizontally augment. It is valid when `aug_pred` is True. Default: True.
        flip_vertical (bool, optional): Whether to use flip vertically augment. It is valid when `aug_pred` is True. Default: False.
        is_slide (bool, optional): Whether to predict by sliding window. Default: False.
        stride (tuple|list, optional): The stride of sliding window, the first is width and the second is height.
            It should be provided when `is_slide` is True.
        crop_size (tuple|list, optional): The crop size of sliding window, the first is width and the second is height.
            It should be provided when `is_slide` is True.
    """
    utils.utils.load_entire_model(model, model_path)
    model.eval()
    nranks = paddle.distributed.get_world_size()
    local_rank = paddle.distributed.get_rank()
    if nranks > 1:
        img_lists = partition_list(image_list, nranks)
    else:
        img_lists = [image_list]

    logger.info("Start to predict...")
    progbar_pred = progbar.Progbar(target=len(img_lists[0]), verbose=1)
    with paddle.no_grad():
        for i, im_path in enumerate(img_lists[local_rank]):
            im = cv2.imread(im_path)
            ori_shape = im.shape[:2]
            im, _ = transforms(im)
            im = im[np.newaxis, ...]
            im = paddle.to_tensor(im)

            if aug_pred:
                pred = infer.aug_inference(
                    model,
                    im,
                    ori_shape=ori_shape,
                    transforms=transforms.transforms,
                    scales=scales,
                    flip_horizontal=flip_horizontal,
                    flip_vertical=flip_vertical,
                    is_slide=is_slide,
                    stride=stride,
                    crop_size=crop_size)
            else:
                pred = infer.inference(
                    model,
                    im,
                    ori_shape=ori_shape,
                    transforms=transforms.transforms,
                    is_slide=is_slide,
                    stride=stride,
                    crop_size=crop_size)
            pred = paddle.squeeze(pred)
            pred = pred.numpy().astype('uint8')

            # get the saved name
            if image_dir is not None:
                im_file = im_path.replace(image_dir, '')
            else:
                im_file = os.path.basename(im_path)
            if im_file[0] == '/' or im_file[0] == '\\':
                im_file = im_file[1:]

            # Shift the predicted labels back so that they start from 1,
            # matching the competition encoding. Vectorized; I first wrote the
            # nested loop below out of habit (thanks to 嘟嘟 for the tip).
            # for jj in range(256):
            #     for j in range(256):
            #         pred[jj][j] = pred[jj][j] + 1
            pred = pred + 1

            # 000001_GF.tif -> 000001_LT.tif. The suffix is removed explicitly
            # instead of with str.strip("_GF"), which strips a character set.
            stem = os.path.splitext(im_file)[0]
            if stem.endswith("_GF"):
                stem = stem[:-len("_GF")]
            pred_saved_path = os.path.join(save_dir, stem + "_LT.tif")
            mkdir(pred_saved_path)
            cv2.imwrite(pred_saved_path, pred)

            progbar_pred.update(i + 1)
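A common pitfall when deriving output names like these is `str.strip("_GF")`: it removes any leading or trailing `_`, `G`, or `F` characters, not the literal suffix. That happens to work for purely numeric IDs such as 000001 but not in general. A suffix-based version, as a sketch with a hypothetical helper name:

```python
import os


def prediction_name(im_path, src_suffix="_GF", dst_suffix="_LT"):
    """Turn an input image path like 000001_GF.tif into 000001_LT.tif."""
    stem = os.path.splitext(os.path.basename(im_path))[0]
    if stem.endswith(src_suffix):
        stem = stem[:-len(src_suffix)]
    return stem + dst_suffix + ".tif"


print(prediction_name("/data/000001_GF.tif"))  # 000001_LT.tif
print("G0001_GF".strip("_GF"))                 # 0001 (the leading G is lost)
print(prediction_name("G0001_GF.tif"))         # G0001_LT.tif
```

The helper returns only the file name; joining it with the output directory is left to the caller.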

# Run prediction
%cd ~/PaddleSeg/
!python predict.py \
    --config ../remotesensing.yml \
    --model_path ./output_ocrnet/best_model/model.pdparams \
    --image_path /home/aistudio/test_dataset \
    --save_dir /home/aistudio/result

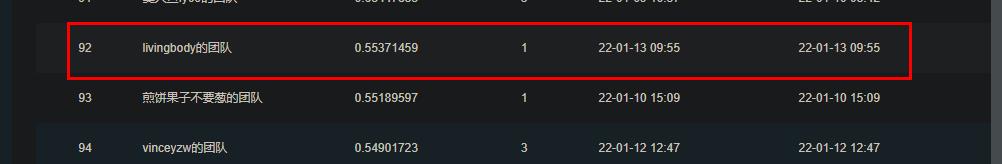

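VI. Submission

Training ran for about 30 epochs in total. Pack the prediction results into a zip archive and submit it to get a score.

To improve the score, train for longer.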
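Packaging can be scripted with the standard library. The sketch below zips every `*_LT.tif` file in a result directory. The archive name results.zip follows the submission sample, but the flat layout inside the archive is an assumption, so compare it against the official sample before submitting. `pack_results` is a hypothetical helper.

```python
import os
import zipfile


def pack_results(result_dir, zip_path):
    """Write all *_LT.tif files from result_dir into a flat zip archive."""
    names = sorted(n for n in os.listdir(result_dir) if n.endswith("_LT.tif"))
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for name in names:
            # arcname keeps only the file name, without the directory prefix.
            zf.write(os.path.join(result_dir, name), arcname=name)
    return names


# Example call (paths taken from the prediction command above):
# pack_results("/home/aistudio/result", "/home/aistudio/results.zip")
```

The function returns the packed file names, which makes it easy to confirm that the count matches the number of test images.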
