
Birder

This repository implements ImageNet training with Birder, the optimizer proposed in the paper "Birder: Communication-Efficient 1-bit Adaptive Optimizer for Practical Distributed DNN Training".

Brief Description

Various gradient compression algorithms have been proposed to alleviate the communication bottleneck in distributed learning, and they have demonstrated effectiveness in terms of high compression ratios and theoretically low communication complexity. However, when it comes to practically training modern deep neural networks (DNNs), these algorithms have yet to match the inference performance of uncompressed SGD-momentum (SGDM) and adaptive optimizers (e.g., Adam). More importantly, recent studies suggest that these algorithms actually offer no speed advantage over SGDM/Adam when used with common distributed DNN training frameworks (e.g., DistributedDataParallel (DDP)) in typical settings, due to heavy compression/decompression computation, incompatibility with the efficient All-Reduce, or the requirement of uncompressed warmup at the early stage. For these reasons, we propose a novel 1-bit adaptive optimizer, dubbed Binary Randomization adaptive optimizer (Birder). The quantization of Birder is easy and lightweight to compute, and it does not require warmup with its uncompressed version at the beginning of training. We also devise Hierarchical-1-bit-All-Reduce to further lower the communication volume. We prove theoretically that Birder promises the same convergence rate as Adam. Extensive experiments, conducted on 8 to 64 GPUs (1 to 8 nodes) using DDP, demonstrate that Birder achieves inference performance comparable to uncompressed SGDM/Adam, with up to 2.5× speedup for training ResNet-50 and 6.3× speedup for training BERT-Base.
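The abstract names two ideas without spelling out their exact form, so the following is only a minimal sketch under stated assumptions. It shows a generic unbiased randomized 1-bit quantizer (each entry becomes +s or -s, where s is the largest magnitude, chosen at random so that the expected value equals the input) and a generic two-level all-reduce in which GPUs first average inside their node in full precision, so that only one 1-bit message per node crosses the slower inter-node network. The function names `randomized_binarize`, `dequantize` and `hierarchical_1bit_allreduce` are hypothetical, and communication is simulated with plain lists. Birder's actual quantizer and communication hook (presumably in quantization_birder_hook.py) may differ in detail.

```python
import random


def randomized_binarize(x, rng):
    """Assumed generic randomized 1-bit quantizer (not necessarily Birder's exact one).

    Returns a shared scale s = max|x_i| plus one bit per entry. Entry x_i is
    encoded as +s with probability (s + x_i) / (2s), otherwise as -s, so the
    decoded value equals x_i in expectation.
    """
    scale = max((abs(v) for v in x), default=0.0)
    if scale == 0.0:
        return 0.0, [True] * len(x)  # all-zero input decodes to all zeros
    bits = [rng.random() < (scale + v) / (2.0 * scale) for v in x]
    return scale, bits


def dequantize(scale, bits):
    """Map each bit back to +scale or -scale."""
    return [scale if b else -scale for b in bits]


def hierarchical_1bit_allreduce(grads, gpus_per_node, rng):
    """Simulate a generic two-level all-reduce over per-GPU vectors.

    `grads` holds one vector per GPU, grouped node by node. Only the
    1-bit node payloads would cross the inter-node network.
    """
    n_gpus, dim = len(grads), len(grads[0])
    if n_gpus % gpus_per_node != 0:
        raise ValueError("number of GPUs must be a multiple of gpus_per_node")
    n_nodes = n_gpus // gpus_per_node

    # Step 1: full-precision average inside each node (fast intra-node link).
    node_means = []
    for k in range(n_nodes):
        local = grads[k * gpus_per_node:(k + 1) * gpus_per_node]
        node_means.append([sum(g[i] for g in local) / gpus_per_node for i in range(dim)])

    # Step 2: each node compresses its mean to 1 bit per entry plus one scale.
    payloads = [randomized_binarize(m, rng) for m in node_means]

    # Step 3: every node decodes all payloads and averages them, then shares
    # the result with its local GPUs, so all GPUs end with the same vector.
    decoded = [dequantize(s, b) for s, b in payloads]
    global_mean = [sum(d[i] for d in decoded) / n_nodes for i in range(dim)]
    return [list(global_mean) for _ in range(n_gpus)]


if __name__ == "__main__":
    rng = random.Random(0)
    x = [0.3, -0.1, 0.05, -0.4]
    trials = 20000
    avg = [0.0] * len(x)
    for _ in range(trials):
        s, b = randomized_binarize(x, rng)
        for i, v in enumerate(dequantize(s, b)):
            avg[i] += v / trials
    # The running average should land close to x, illustrating unbiasedness.
    print([round(v, 3) for v in avg])

    # 4 nodes x 8 GPUs, the setup used in this README.
    grads = [[rng.uniform(-1, 1) for _ in range(5)] for _ in range(32)]
    out = hierarchical_1bit_allreduce(grads, gpus_per_node=8, rng=rng)
    print(len(out), out[0] == out[31])
```

In this sketch, each inter-node message carries 1 bit per entry plus one 32-bit scale instead of 32 bits per entry, roughly a 32× reduction for large tensors, and the hierarchy cuts the number of inter-node senders from the number of GPUs (32) to the number of nodes (4).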

Requirements

- Install PyTorch (pytorch.org)
- Install the remaining dependencies: pip install -r requirements.txt
- Download the ImageNet dataset from http://www.image-net.org/
- Then, move and extract the training and validation images to labeled subfolders, using the extract_ILSVRC.sh shell script included in this repository
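Before launching training, it can help to confirm that [imagenet-folder] has the layout main.py most likely expects: train/ and val/ directories with one subfolder per class. This is an assumption based on the upstream PyTorch ImageNet example, which reads the data with torchvision's ImageFolder. ILSVRC-2012 has 1,000 classes. The helper `check_imagenet_layout` is hypothetical and only inspects directory structure, not image contents.

```python
import sys
from pathlib import Path


def check_imagenet_layout(root, expected_classes=1000):
    """Return a list of layout problems; an empty list means none were found."""
    problems = []
    root = Path(root)
    for split in ("train", "val"):
        split_dir = root / split
        if not split_dir.is_dir():
            problems.append(f"missing directory: {split_dir}")
            continue
        entries = list(split_dir.iterdir())
        # Each class should be its own subfolder (ImageFolder-style layout).
        class_dirs = [p for p in entries if p.is_dir()]
        if len(class_dirs) != expected_classes:
            problems.append(
                f"{split_dir}: expected {expected_classes} class folders, found {len(class_dirs)}"
            )
        # Images left directly in train/ or val/ usually mean extraction was incomplete.
        loose_files = [p for p in entries if p.is_file()]
        if loose_files:
            problems.append(f"{split_dir}: {len(loose_files)} file(s) not inside a class folder")
    return problems


if __name__ == "__main__":
    # Usage: python check_layout.py /path/to/imagenet   (hypothetical file name)
    for problem in check_imagenet_layout(sys.argv[1] if len(sys.argv) > 1 else "."):
        print(problem)
```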

Distributed Data Parallel Training with Birder

To train a model with Birder, run main.py with the desired model architecture and the path to the ImageNet dataset. The following commands train ResNet-50 on 4 nodes with 8 GPUs per node; run one command on each node, changing only --rank:

python main.py -a resnet50 --dist-url 'tcp://IP_OF_NODE0:FREEPORT' --dist-backend 'nccl' --multiprocessing-distributed --world-size 4 --rank 0 --batch-size 256 --epochs 100 --lr 0.004 --weight-decay 0.1 --birder --comp_flag --hierarchy --all_gather_by_chunks --warm_up_epochs 10 --beta 0.95 --log_dir [LOG_PATH] [imagenet-folder]

python main.py -a resnet50 --dist-url 'tcp://IP_OF_NODE0:FREEPORT' --dist-backend 'nccl' --multiprocessing-distributed --world-size 4 --rank 1 --batch-size 256 --epochs 100 --lr 0.004 --weight-decay 0.1 --birder --comp_flag --hierarchy --all_gather_by_chunks --warm_up_epochs 10 --beta 0.95 --log_dir [LOG_PATH] [imagenet-folder]

python main.py -a resnet50 --dist-url 'tcp://IP_OF_NODE0:FREEPORT' --dist-backend 'nccl' --multiprocessing-distributed --world-size 4 --rank 2 --batch-size 256 --epochs 100 --lr 0.004 --weight-decay 0.1 --birder --comp_flag --hierarchy --all_gather_by_chunks --warm_up_epochs 10 --beta 0.95 --log_dir [LOG_PATH] [imagenet-folder]

python main.py -a resnet50 --dist-url 'tcp://IP_OF_NODE0:FREEPORT' --dist-backend 'nccl' --multiprocessing-distributed --world-size 4 --rank 3 --batch-size 256 --epochs 100 --lr 0.004 --weight-decay 0.1 --birder --comp_flag --hierarchy --all_gather_by_chunks --warm_up_epochs 10 --beta 0.95 --log_dir [LOG_PATH] [imagenet-folder]
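Since the four commands differ only in --rank, a small launcher can generate them. This is a sketch with hypothetical names (`build_command`, `launch`) and placeholder paths ("logs", "/data/imagenet"); the flags are copied verbatim from the commands above. If main.py follows the upstream PyTorch ImageNet example (an assumption), then with --multiprocessing-distributed, --world-size counts nodes, --rank is the node index, and the script spawns one process per local GPU and splits --batch-size among them, which would give 32 samples per GPU with 8 GPUs.

```python
import shlex
import subprocess


def build_command(rank, log_dir, data_dir, world_size=4):
    """Return the argv list for one node; only --rank differs between nodes."""
    if not 0 <= rank < world_size:
        raise ValueError(f"rank must be in [0, {world_size}), got {rank}")
    return [
        "python", "main.py", "-a", "resnet50",
        "--dist-url", "tcp://IP_OF_NODE0:FREEPORT",  # placeholder: node 0's IP and a free port
        "--dist-backend", "nccl",
        "--multiprocessing-distributed",
        "--world-size", str(world_size), "--rank", str(rank),
        "--batch-size", "256", "--epochs", "100",
        "--lr", "0.004", "--weight-decay", "0.1",
        "--birder", "--comp_flag", "--hierarchy", "--all_gather_by_chunks",
        "--warm_up_epochs", "10", "--beta", "0.95",
        "--log_dir", log_dir, data_dir,
    ]


def launch(rank, log_dir, data_dir):
    """Hypothetical wrapper: run this node's command and raise if it fails."""
    subprocess.run(build_command(rank, log_dir, data_dir), check=True)


if __name__ == "__main__":
    # Print the command for every node, e.g. to paste into one terminal per node.
    for r in range(4):
        print(shlex.join(build_command(r, "logs", "/data/imagenet")))
```

Passing an argument list to subprocess.run avoids shell quoting issues; shlex.join (Python 3.8+) is used only to display the equivalent shell command.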

The default learning rate schedule starts at 0.004 and decays by a factor of 10 every 30 epochs.
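The step schedule described above is lr(epoch) = 0.004 × 0.1^⌊epoch / 30⌋. A minimal sketch follows; `step_lr` is a hypothetical name, and it ignores the --warm_up_epochs 10 flag used in the commands, whose exact interaction with the schedule is not documented here.

```python
def step_lr(epoch, base_lr=0.004, gamma=0.1, step_size=30):
    """Learning rate for a 0-indexed epoch under step decay (warm-up not modeled)."""
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    return base_lr * gamma ** (epoch // step_size)


if __name__ == "__main__":
    # Epochs 0-29, 30-59, 60-89 and 90-99 of a 100-epoch run each share one value.
    for epoch in (0, 30, 60, 90):
        print(epoch, step_lr(epoch))
```

In PyTorch, the same decay corresponds to torch.optim.lr_scheduler.StepLR(optimizer, step_size=30, gamma=0.1), stepped once per epoch.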

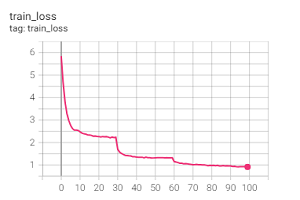

Training loss with Birder on 4 nodes × 8 GPUs

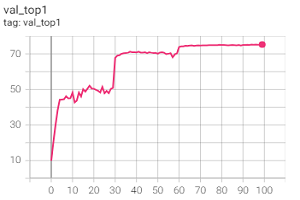

Validation top-1 accuracy with Birder on 4 nodes × 8 GPUs

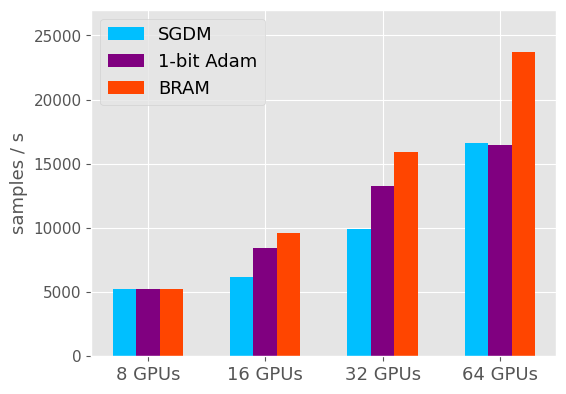

Communication Efficiency Comparison

Ethernet

To compare communication efficiency over Ethernet, run main.py with --net_type 'ethernet', using the following scripts (4 nodes, one script per node):

scripts/comm_node4_ethernet_rank0.sh

scripts/comm_node4_ethernet_rank1.sh

scripts/comm_node4_ethernet_rank2.sh

scripts/comm_node4_ethernet_rank3.sh
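The benchmark scripts follow a fixed naming pattern, so a node can pick its own script from the network type and its node rank. This is a hypothetical sketch (`comm_script`, `run_comm_benchmark` are illustrative names); it assumes the scripts are bash-compatible, are run from the repository root, and that each node runs exactly one of them. The same helper covers the InfiniBand scripts via net_type "ibnet".

```python
import subprocess
import sys
from pathlib import Path

NET_TYPES = ("ethernet", "ibnet")


def comm_script(net_type, rank):
    """Path of the 4-node benchmark script for a network type and node rank."""
    if net_type not in NET_TYPES:
        raise ValueError(f"net_type must be one of {NET_TYPES}, got {net_type!r}")
    if not 0 <= rank < 4:
        raise ValueError(f"rank must be 0-3 for the 4-node scripts, got {rank}")
    return Path("scripts") / f"comm_node4_{net_type}_rank{rank}.sh"


def run_comm_benchmark(net_type, rank):
    """Run this node's script with bash and raise if it exits with an error."""
    subprocess.run(["bash", str(comm_script(net_type, rank))], check=True)


if __name__ == "__main__" and len(sys.argv) == 3:
    # e.g. python run_comm.py ethernet 0   (hypothetical file name)
    run_comm_benchmark(sys.argv[1], int(sys.argv[2]))
```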

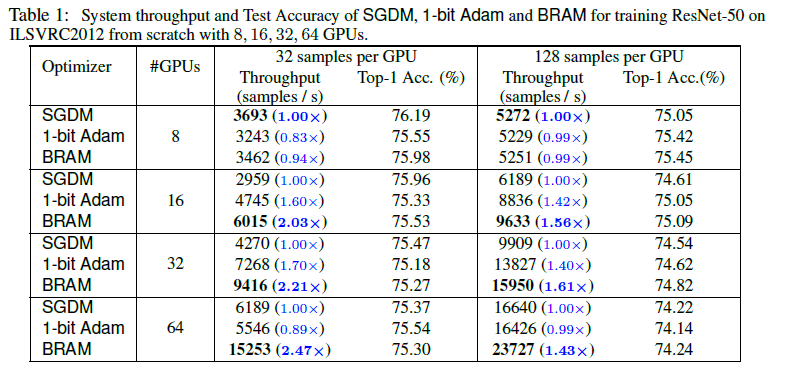

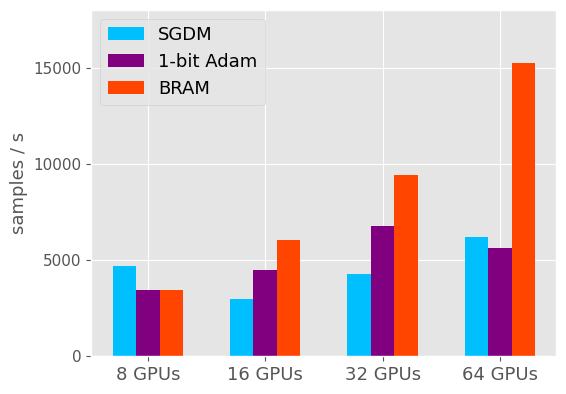

ethernet comparison results

ethernet system throughput

32 samples per GPU

128 samples per GPU
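The Ethernet throughput results are reported for 32 and 128 samples per GPU. Assuming "system throughput" means samples processed per second by the whole cluster (the README does not define it, and main.py may measure it differently), it follows from the per-GPU batch, the 4 × 8 GPU count and the measured time per iteration. A minimal sketch with hypothetical helper names:

```python
def global_batch(samples_per_gpu, gpus_per_node=8, n_nodes=4):
    """Samples consumed per iteration across the whole cluster."""
    return samples_per_gpu * gpus_per_node * n_nodes


def system_throughput(samples_per_gpu, seconds_per_iter, gpus_per_node=8, n_nodes=4):
    """Cluster-wide samples per second, given the wall-clock time of one iteration."""
    if seconds_per_iter <= 0:
        raise ValueError("seconds_per_iter must be positive")
    return global_batch(samples_per_gpu, gpus_per_node, n_nodes) / seconds_per_iter


if __name__ == "__main__":
    for per_gpu in (32, 128):
        # 0.5 s per iteration is a made-up timing, used only to show the arithmetic.
        print(per_gpu, global_batch(per_gpu), system_throughput(per_gpu, 0.5))
```

With 32 GPUs, the two settings correspond to global batches of 1,024 and 4,096 samples per iteration.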

InfiniBand (ibnet)

To compare communication efficiency over InfiniBand, run main.py with --net_type 'ibnet', using the following scripts (4 nodes, one script per node):

scripts/comm_node4_ibnet_rank0.sh

scripts/comm_node4_ibnet_rank1.sh

scripts/comm_node4_ibnet_rank2.sh

scripts/comm_node4_ibnet_rank3.sh

Citing Birder

@inproceedings{birder2023,
title={Birder: Communication-Efficient 1-bit Adaptive Optimizer for Practical Distributed DNN Training},
author={Hanyang Peng and Shuang Qin and Yue Yu and Jin Wang and Hui Wang and Ge Li},
booktitle={Advances in Neural Information Processing Systems},
year={2023}
}