Are you sure you want to delete this task? Once this task is deleted, it cannot be recovered.

|

|

2 years ago | |

|---|---|---|

| agents/policy_gradient | 2 years ago | |

| envs | 2 years ago | |

| miscs | 2 years ago | |

| original_papers | 2 years ago | |

| utils | 2 years ago | |

| README.md | 2 years ago | |

| test_agent.py | 2 years ago | |

| test_dummy.py | 2 years ago | |

| test_math.py | 2 years ago | |

| test_monitor.py | 2 years ago | |

| test_pipe.py | 2 years ago | |

| test_subproc.py | 2 years ago | |

README.md

PCL-RLZoo

This is an ensemble of RL algorithm implementations under development. We currently call it as PCL-RLZoo because the project is supported by Peng Cheng Lab. We expect it to be compatible for multiple deep learning toolboxes (tensorflow, torch, tensorlayer, mindspore...) and hoping it can really become a zoo full of RL algorithms.

Currently Supported Agents

Stable

Unfortunately, at present, no algorithms experienced large-scale empirical tests.

Beta

- Vanilla Policy Gradient - VPG (tensorflow)

- Natural Policy Gradient - NPG (tensorflow)

- Advantage Actor Critic - A2C (tensorflow)

- Trust Region Policy Optimization - TRPO (tensorflow)

- Proximal Policy Optimization - PPO (tensorflow)

Used Tricks

- Vectorized Environment

- Multi-processing Training

- Generalized Advantage Estimation

- Observation Normalization

- Reward Normalization

- Advantage Normalization

- Gradient Clipping

You can block any last five tricks as you like by changing the default parameters in functions.

Basic Usage

The following four lines of code are enough to start training an RL agent. The template is shown in test_agent.py.

# trying with toy environments or mujoco environments

make_env_fns = [make_env_fn("CartPole-v0",i) for i in range(8)]

envs = DummyVecEnv(make_env_fns)

# choose an agent you like

agent = TRPO_TF_Agent(envs)

agent.train_agent(init_steps=2000,num_steps=10000)

As our project support multiprocess communication by mpi4py, so you can run with the following command to start training with K sub-process.

mpiexec -n K python test_agent.py

You can also launch the training regularly as

python test_agent.py

Customize Usage

- If you want to train an RL agent in your own environments, you can write an environment wrapper and implement the core function reset() and step(action) and add it in make_env_funcs.py file. The environment template is shown in "./envs/wrappers/xxx_wrappers.py".

- If you want to train an agent with some novel network architecture, you can modify content in the function define_network in the xxx_agent.py file in "agents/xxx/xxx_xx_agent". (Hints: Better not playing with the content in define_optimization() function.)

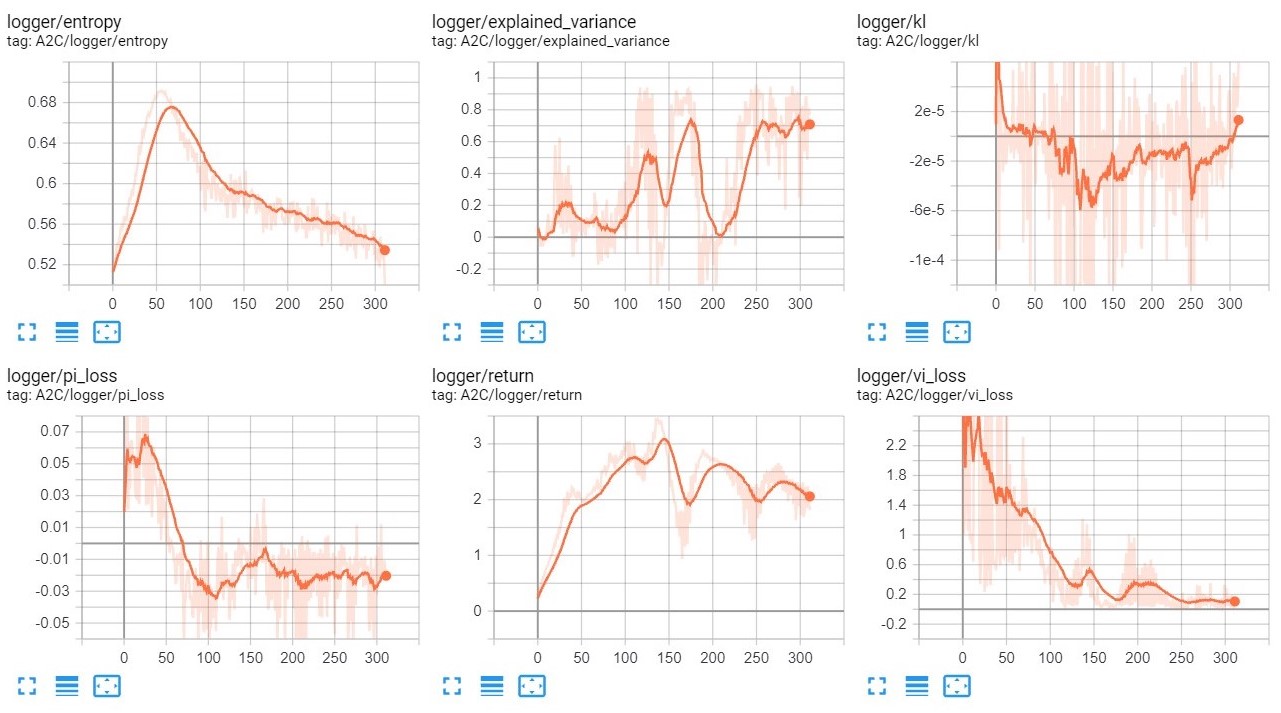

Logger

You can use tensorboard to visualize what happened in the training process. After training, the log file will be automatically generated in the directory ".results/" and you should be able to see some training data after running the command.

tensorboard --logdir ./results/

If everything going well, you should get a similar display like below.

To visualize the training scores, training times and the performance, you need to initialize the environment as

env = MonitorVecEnv(DummyVecEnv(...))

then, after training terminated, two extra files "xxx.npy" and "xxx.gif" will be generated in the "./results/" directory. The "xxx.npy" record the scores and clock time for each episode in training. But we haven't provided a plotter.py to draw the curves for this.